Reading view

Installing Void Linux on ZFS with Hibernation Support

Online Age Verification Tools for Child Safety Are Surveilling Adults

Barbara Booth, CNBC:

Civil liberties’ advocates warn that concentrating large volumes of identity data among a small number of verification vendors can create attractive targets for hackers and government demands. Earlier this year, Discord disclosed a data breach that exposed ID images belonging to approximately 70,000 users through a compromised third-party service, highlighting the security risks associated with storing sensitive identity information.

[…]

According to Tandy, as more states adopt age-verification mandates and companies race to comply, the infrastructure behind those systems is likely to become a permanent fixture of online life. Taken together, industry leaders say the rapid spread of age-verification laws may push platforms toward systems that verify age once and reuse that credential across services.

The hurried implementation of age verification sounds fairly terrible, counterproductive, illegal in the U.S., and discriminatory, but we should not pretend that we are only now being subject to risky and overbearing surveillance on the web. The ecosystem powering behavioural ad targeting — including data brokers, the biggest of which have reported staggering data breaches for a decade — has all but ensured our behaviour on popular websites and in mobile apps is already tracked and tied to some proxy for our identity.

That is not an excuse for the poor implementation of age verification, nor justification for its existence. If anything, it is a condemnation of the current state of the web that this barely moves the needle on privacy. If I had to choose whether to compromise for commerce or for the children, it would be the latter, but the correct answer is, likely, neither.

Grammarly’s ‘Expert Review’ Feature Presents Fake Advice in the Names of Real Journalists and Authors

Casey Newton, Platformer:

On Friday I learned to my surprise that I had become an editor for Grammarly. The subscription-based writing assistant has introduced a feature named “expert review” that, in the company’s words, “is designed to take your writing to the next level — with insights from leading professionals, authors, and subject-matter experts.”

Read a little further, though, and you’ll learn that these “insights” are not actually “from” leading professionals, or any human person at all. Rather, they are AI-generated text, which may or may not reflect whichever “leading professional” Grammarly slapped their names on.

Miles Klee, Wired:

As advertised on a support page, Grammarly users can solicit tips from virtual versions of living writers and scholars such as Stephen King and Neil deGrasse Tyson (neither of whom responded to a request for comment) as well as the deceased, like the editor William Zinsser and astronomer Carl Sagan. Presumably, these different AI agents are trained on the oeuvres of the people they are meant to imitate, though the legality of this content-harvesting remains murky at best, and the subject of many, many copyright lawsuits.

I do not think a disclaimer explaining it does “not indicate any affiliation with Grammarly or endorsement by those individuals or entities” will sufficiently distance the company from its claim of providing “insights from leading professionals, authors, and subject-matter experts” attributed to the names of people who did not agree to participate in this. Apparently, it is incumbent upon them to opt out by emailing expertoptout@superhuman.com. Most people will obviously not do this — because why would anyone realize they need to opt out? — but especially those who are dead yet are still being called upon for their expertise. Let Carl Sagan rest.

Apple Used to Design Its Laptops for Repairability

Charlie Sorrel, of iFixit:

Apple’s MacBooks haven’t always been monolithic, barely repairable slabs of aluminum, glass, and glue. They used to be almost delightful in their repairable features, from their batteries to their Wi-Fi cards. Powerbooks, iBooks, and especially early MacBooks showed what happens when Apple applies its design skills directly to repairability and maintenance, instead of to thinness above all. Today we’re going to take a look at the best repairability features that Apple has ditched.

These four complaints range from the somewhat quaint — swappable Wi-Fi cards — to the stuff I actually miss, which is everything else. RAM and disk upgrades are a gimme since the cost-per-gigabyte (generally) declines over time, and I would love easily swappable batteries. But right now, nearly four years into owning this MacBook Pro, I would also really like to be able to swap in a new keyboard in the future. Not only are the keycaps unintentionally becoming polished, some oft-used keys feel a little mushy. Not much, and barely enough to notice, but I imagine their clickiness will not improve over time.

One quibble, emphasis mine:

[…] I have an old 2012 MacBook Air running Linux. I swapped the HDD for an SSD, maxed out the RAM, and dropped in a new battery, and I see no reason it wouldn’t easily keep rolling for another 10 years.

Unlikely. The 2012 MacBook Air only came with an SSD; a standard hard disk was not an option.

Another Appearance Control Is Coming to Accessibility Settings in iOS 26.4

Juli Clover, MacRumors:

Apple renamed the prior Reduce Highlighting Effects Accessibility setting to “Reduce Bright Effects,” and explained what it does.

Apple says the feature “minimizes highlighting and flashing when interacting with onscreen elements, such as buttons or the keyboard.

In my testing, this does exactly what you would expect. In places like toolbar buttons — or the buttons in the area of what is left of a toolbar, anyhow — the passcode entry screen, and Control Centre, the glowing tap effects are minimized or removed.

I do not find those effects particularly distracting, and I think turning them off saps some of the life out of the Liquid Glass design language, but I can see why some would be bothered by them. It is not the case that iOS 26 would be better if none of these appearance controls were present, only that they should not be necessary.

Minister for Innovation, Science, and Economic Development Announces ‘Guardrails’ for TikTok Canada Operations

There are three agreed-upon policies which, in the airy language of a government press release, seem reasonable enough to apply to all social platforms, yet are only relevant to TikTok. The first is exceedingly vague:

TikTok will implement enhanced protection for Canadians’ personal information, including new security gateways and privacy-enhancing technologies to control access to Canadian user data in order to reduce the risk of unauthorized or prohibited access.

There are no details about what the “new security gateways and privacy-enhancing technologies” are, nor why the sole goal is preventing “prohibited access” rather than “exploitative access”.

The second — complying with the recommendations of the Privacy Commissioner — was already underway, and the third is an “independent third-party monitor”, which seems fine.

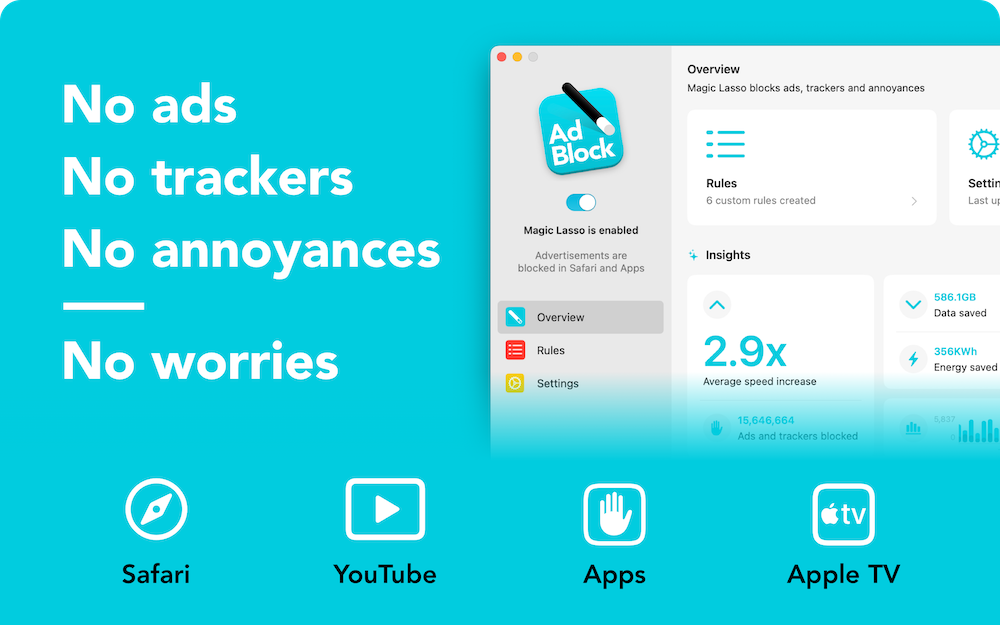

Sponsor: Magic Lasso Adblock: Effortlessly Block Ads on Your iPhone, iPad, Mac, and Apple TV

Do you want an all-in-one solution to block ads, trackers, and annoyances across all your Apple devices?

Then download Magic Lasso Adblock — the ad blocker designed for you.

With Magic Lasso Adblock you can effortlessly block ads on your iPhone, iPad, Mac, and Apple TV.

Magic Lasso is a single, native app that includes everything you need:

Safari Ad Blocking — Browse 2.0× faster in Safari by blocking all ads, with no annoying distractions or pop ups

YouTube Ad Blocking — Block all YouTube ads in Safari, including all video ads, banner ads, search ads, plus many more

App Ad Blocking — Block ads and trackers across the news, social media, and game apps on your device, including other browsers such as Chrome and Firefox

Apple TV Ad Blocking — Watch your favourite TV shows with less interruptions and protect your privacy from in-app ad tracking with Magic Lasso on your Apple TV

Best of all, with Magic Lasso Adblock, all ad blocking is done directly on your device, using a fast, efficient Swift-based architecture that follows our strict zero data collection policy.

With over 5,000 five star reviews, it’s simply the best ad blocker for your iPhone, iPad, Mac, and Apple TV.

And unlike some other ad blockers, Magic Lasso Adblock respects your privacy, doesn’t accept payment from advertisers, and is 100% supported by its community of users.

So, ensure your browsing history, app usage, and viewing habits stay private with Magic Lasso Adblock.

Join over 400,000 users and download Magic Lasso Adblock today.

Mixing News Coverage and ‘Prediction Markets’ Is a Dangerous Gamble

Nilay Patel and Liz Lopatto discussed “prediction markets” on the Verge’s “Decoder” podcast; here is Patel’s summary:

Insider trading is supposed to be illegal, and so is operating an unregulated sports book. So you’re now starting to see Kalshi and Polymarket getting hit from both sides of this broader regulatory debate, and 2026 is shaping up to be the year that all of this really comes to a head. To what end? It’s hard to say, especially as these companies cozy up to the Trump administration.

But it’s also becoming increasingly untenable for prediction markets to sit in the middle of the tension between gambling on the news and trying to self-regulate such that they don’t encourage insider trading.

A little under a month after Gallup announced it would stop polling for presidential approval, the Associated Press said it would begin integrating Kalshi bets into its election coverage. As Patel and Lopatto say, however, election betting is among the least problematic news gambling.

The Central Lie of Prediction Markets

Charlie Warzel, the Atlantic (gift link):

Prediction markets claim to harness the wisdom of crowds to provide reliable public data: Because people are putting real money behind their opinions, they are expressing what they actually believe is most likely to happen, which, according to the reasoning of these platforms, means that events will unfold accordingly. Many news organizations, and Substack, now have partnerships with prediction markets — the subtext being that they provide some kind of news-gathering function. Some users who distrust mainstream media turn to the markets in place of traditional journalism.

But in reality, prediction markets produce the opposite of accurate, unbiased information. They encourage anyone with an informational edge to use their knowledge for personal financial gain. In this way, prediction markets are the perfect technology for a low-trust society, simultaneously exploiting and reifying an environment in which believing the motives behind any person or action becomes harder.

I had no idea so-called “prediction markets” like Kalshi and Polymarket were promoting themselves as forecasters of real information, let alone that anyone believed them. I always assumed “prediction markets” was a euphemism.

A spokesperson for Kalshi told Warzel that betting on current events is a way to “create accurate, unbiased forecasts”, and that is something we can verify. If this were true, bettors should have been able to forecast, for example, the popular vote split of the 2024 U.S. presidential election. Polls had Harris and Trump neck and neck, but on election day, 75.8% of Kalshi bettors believed Harris would prevail. There is not much granularity to Kalshi’s charts, but the forecast on Polymarket was favourable to Harris at 5:00 PM on November 5 — election day — and it flips to a Trump lead at the next available data point, 5:00 AM the following day, and well after it was obvious Trump won the popular vote.

This is just a way to gamble on current events, which is tragic and pathetic. We do not need to pretend these sites are anything more substantial than that.

Google and Epic Games Announce Settlement

Sameer Samat, Google’s president of Android Ecosystem:

Today we are announcing substantial updates that evolve our business model and build on our long history of openness globally. We’re doing that in three ways: more billing options, a program for registered app stores, and lower fees and new programs for developers.

Epic Games CEO Tim Sweeney on X:

Google is opening up Android all the way with robust support for competing stores, competing payments, and a better deal for all developers. So, we’ve settled all of our disputes worldwide. THANKS GOOGLE!

Simon Sharwood, the Register:

Epic Games approved of the changes.

“These changes will evolve Android into a true open platform with competition among stores,” the company stated. “Globally, developers will have choices in how they make payments using Google Play’s payment system and competing payment systems, with reduced fees and the ability to point users outside apps to make purchases.

Epic also said “Google will take steps to support the future open metaverse,” a probable reference to the deal that will see games made with the Unity engine made available within Fortnite.

Neither Sweeney nor Epic Games can express anything less than elation with this outcome in no small part because they signed away their ability to do that. It still amazes me that concession ended up in the final agreement. It seems like the kind of thing that Google’s very expensive lawyers would pitch as leverage with Epic Games’ not-quite-as-expensive lawyers. In an interview with Dean Takahashi, of GamesBeat, it seems like Epic was eager to settle with terms that apply worldwide:

Asked why Sweeney decided to settle rather than litigate in every court in the world, he said, “This is just a really important thing that people should understand. The Epic versus Google court decision in the United States only has effect in the United States. It does nothing about the rest of the world. And the United States is about 30% of Google Play revenue and about 5% of Google Play users.”

He said it was never going to be a complete worldwide solution, and the court, throughout the proceedings, very clearly, said that the court wanted to establish competition among stores and competition among payments without setting prices in the market.

Curiously, not long before this settlement, Google announced it would begin requiring Android developers to be verified for their software to be installable, even by side-loading. I am curious if the combination of these changes meaningfully impacts users’ security or privacy. At a glance, the changes that settled this lawsuit seem like a welcome set of improvements that, sure, was assuredly not an altruistic fight by Epic Games, and will probably result in Sweeney getting even richer.

Regardless, it is notable for these sweeping changes to be brought to Android phones worldwide in the coming years, while Apple’s App Store is a patchwork of region-specific policies difficult for developers to navigate. It is too bad there is not really competition between these stores. Most people who buy smartphones choose the platform as a whole and accept whatever software experience they are provided. They do not need to bother themselves with the business terms of each store. With the improvements to third-party stores on Android, it sets up the possibility for greater competition within that platform. Apple should do the same.

Apple’s New Studio Displays, Plural

In hardware terms alone, Apple has been delivering an incredible run of Macs arguably since 2020, and easily since 2021. There are quibbles, sure — the display notch still bugs some people, the keyboard material wears poorly, and repairability has declined — but these are, overall, pretty sweet machines. The Macs announced this week seem like they will continue that hot streak.

I happen to be in the market for a new Mac, perhaps this year, and I should be spoiled for choice. I kind of am — the Mac Mini and Mac Studio are both alluring. But I am sadly attached to the room offered by my beloved 27-inch iMac, and Apple’s new lineup of displays is a sore point.

Stephen Hackett, 512 Pixels:

Yes, those are two different products, but they both feature 27-inch, 5K displays in the same enclosure as the previous Studio Display.

Starting at $1599, the new Studio Display is a slight upgrade to the 2022 model.

[…]

The much more interesting of the pair is the $3299 Studio Display XDR.

Those prices are, respectively, $2,100 and $4,500 in Canada. I am not a stranger to spending a lot of money on a screen — I bought a Thunderbolt Display at $1,000 — but that is a lot of money for even the basest of base models, especially since I have no idea whether the sketchy firmware issues have been resolved.

It is not that these displays are bad — far from it — but it is extraordinary that we are ten years removed from 27-inch Retina iMacs that started at just $200 more than the Studio Display is today. Only recently are we seeing more choice in 27-inch 5K displays at considerably lower prices, though without Apple’s very nice stand and quality of materials. At least the XDR has a seemingly new panel.

Three of the seven models in the Mac lineup require an external display. Apple has two choices: one really advanced one that costs as much as a generously-specced Mac Studio, and another that feels like it is stumbling along.

Anyway, here I go again looking for a sick deal I will not find on a Pro Display XDR. Those things really hold their value. Pity.

Tim Sweeney Is Contractually Prohibited from Criticizing Google’s Developer Terms for Years

Sean Hollister, the Verge:

But Google has finally muzzled Tim Sweeney. It’s right there in a binding term sheet for his settlement with Google.

On March 3rd, he not only signed away Epic’s rights to sue and disparage the company, he signed away his right to advocate for any further changes to Google’s app store polices. He can’t criticize Google’s app store practices. In fact, he has to praise them.

The terms (PDF) helpfully clarify that Epic is still allowed to “advocat[e] changes to the policies or practices of […] other companies, including Apple”. This does not mean future criticism of Apple’s business practices — or past criticism, for that matter — is unwarranted or invalid, but it now carries the blunted quality of someone who is not allowed to make the same complaints about Google.

A Toolkit for Hacking iPhones, Possibly Created for the U.S. Government, Has Leaked

Google’s Threat Intelligence Group:

Google Threat Intelligence Group (GTIG) has identified a new and powerful exploit kit targeting Apple iPhone models running iOS version 13.0 (released in September 2019) up to version 17.2.1 (released in December 2023). The exploit kit, named “Coruna” by its developers, contained five full iOS exploit chains and a total of 23 exploits. The core technical value of this exploit kit lies in its comprehensive collection of iOS exploits, with the most advanced ones using non-public exploitation techniques and mitigation bypasses.

The Coruna exploit kit provides another example of how sophisticated capabilities proliferate. Over the course of 2025, GTIG tracked its use in highly targeted operations initially conducted by a customer of a surveillance vendor, then observed its deployment in watering hole attacks targeting Ukrainian users by UNC6353, a suspected Russian espionage group. We then retrieved the complete exploit kit when it was later used in broad-scale campaigns by UNC6691, a financially motivated threat actor operating from China. […]

Andy Greenberg, Wired:

Conspicuously absent from Google’s report is any mention of who the original surveillance company “customer” that deployed Coruna may have been. But the mobile security company iVerify, which also analyzed a version of Coruna it obtained from one of the infected Chinese sites, suggests the code may well have started life as a hacking kit built for or purchased by the US government. Google and iVerify both note that Coruna contains multiple components previously used in a hacking operation known as “Triangulation” that was discovered targeting Russian cybersecurity firm Kaspersky in 2023, which the Russian government claimed was the work of the NSA. (The US government didn’t respond to Russia’s claim.)

I am so curious to know how this thing made it outside the carefully guarded digital walls of the U.S. government or a contractor. While a rare event, it is not the first time the classified weapons of espionage have become public.

U.S. Immigration Police Bought Real-Time Ad Bidding Data for Automated Tracking System

Joseph Cox, 404 Media:

Customs and Border Protection (CBP) bought data from the online advertising ecosystem to track peoples’ precise movements over time, in a process that often involves siphoning data from ordinary apps like video games, dating services, and fitness trackers, according to an internal Department of Homeland Security (DHS) document obtained by 404 Media.

[…]

Although CBP described the move as a pilot, the DHS Office of the Inspector General (OIG) later found both CBP and ICE did not limit themselves to non-operational use. The OIG found that CBP, ICE, and the Secret Service all illegally used the smartphone location data, and found a CBP official used the data to track coworkers with no investigative purpose. CBP and ICE went on to repeatedly purchase access to location data.

There are people out there who will insist, to this day, that behaviourally targeted advertising is not actually a mechanism for surveillance despite all the evidence showing it is, in fact, an essential component.

Annotators in Kenya Describe How They Review Sensitive Data Captured by Meta’s Ray-Bans

Naipanoi Lepapa, Ahmed Abdigadir, and Julia Lindblom, Svenska Dagbladet:

The workers in Kenya say that it feels uncomfortable to go to work. They tell us about deeply private video clips, which appear to come straight out of Western homes, from people who use the glasses in their everyday lives.

Several describe video material showing bathroom visits, sex and other intimate moments.

Another worker talks about people coming out of bathrooms.

It is appalling that massively rich corporations like Meta continue to offload critical tasks like these onto people who receive little support or pay. I recently finished “Ghost Work” by Mary L. Gray and Siddharth Suri and, while not my favourite book nor surfacing anything conceptually new, is worth your time. Meta can and should be doing far better, but can avoid association with labour atrocities better than, say, Nike in the 1990s in part because I doubt most people think too much about human intervention in artificial intelligence. Meta does not celebrate the hard work of its contract labour in Kenya; it does not even acknowledge them.

Speaking of not acknowledging the human labour involved, this story is the obvious nightmare you would expect. Some of these incidents of sensitive video recordings appear to be accidental, while others are seemingly deliberate. Without excusing the people who seem to be recording creepy videos on purpose, I assume few people would have believed it would be seen by someone at a company they probably have not heard about.

At first glance, it appears that we have significant control over our data. It states that voice recordings may only be saved and used for improvement or training of other Meta products if the user actively agrees.

But for the AI assistant to function, voice, text, image and sometimes video must be processed and may be shared onwards. This data processing is done automatically and cannot be turned off.

This is the kind of thing I would expect would be bundled into the additional diagnostic information Meta asks if you would like to opt into sharing. But Meta says this “does not include the photos and videos captured by your glasses”. That is, as this investigation found, part of the mandatory data collection.

This is offensive on behalf of users who might be less likely to consent if they had this full information. But it is also offensive to their romantic partners, friends, acquaintances, and passers-by, none of whom agreed to have their image or conversations adjudicated by these contractors.

⌥ The Window Chrome of Our Discontent

In a WWDC 2011 session, Dan Schimpf explained some of the goals of the refreshed design for Aqua in Mac OS X Lion were “meant to focus the user attention on the active window content”. This sentiment was echoed by John Siracusa in his review of Lion for Ars Technica:

Apple says that its goal with the Lion user interface was to highlight content by de-emphasizing the surrounding user interface elements.

When Apple redesigned Mac OS X again in 2014 with Yosemite, it promised…

[…] a fresh modern look where controls are clearer, smarter and easier to understand, and streamlined toolbars put the focus on your content without compromising functionality.

Then, when it revealed the Big Sur redesign in 2020, it explained:

The entire experience feels more focused, fresh, and familiar, reducing visual complexity and bringing users’ content front and centre.

And you will never guess what it promised in 2025 with the announcement of MacOS Tahoe and Liquid Glass, as introduced by Alan Dye:

Our goal is a beautiful new design that brings joy and delight to every user experience. One that’s more personal, and puts greater focus on your content — all while still feeling instantly familiar.

It is not just Apple, either. Here is Microsoft’s Jensen Harris at Build 2011 describing a key goal for the company’s then-new Metro design language:

Metro-style apps have room to breathe. They’re not about the chrome, they’re about the content. […] For years, Windows was always about adding stuff. We added bars, and panes, and doodads, and widgets, and gadgets, and bars — and stuff everywhere. And that’s how we defined our U.I., based on what new widgets we added. Now, we’ve receded into the background, and the app is sitting out there on the stage.

And later, as Microsoft rolled out app updates with its Fluent Design language, it described them in familiar terms:

With the updated OneDrive, your content takes center stage. The improved visual design reduces clutter and distractions, allowing you to focus on what’s important – your content.

This is a laudable goal if the opposite is, I assume, increasing the amount of clutter in user interfaces and making them more distracting. Nobody wants that. Then again, while the objective may be quite reasonable, there are surely different ways of achieving it — but Apple has embraced a single strategy: make the interface blend into the document. (I will be focusing on MacOS here as it is the platform I am most familiar with.)

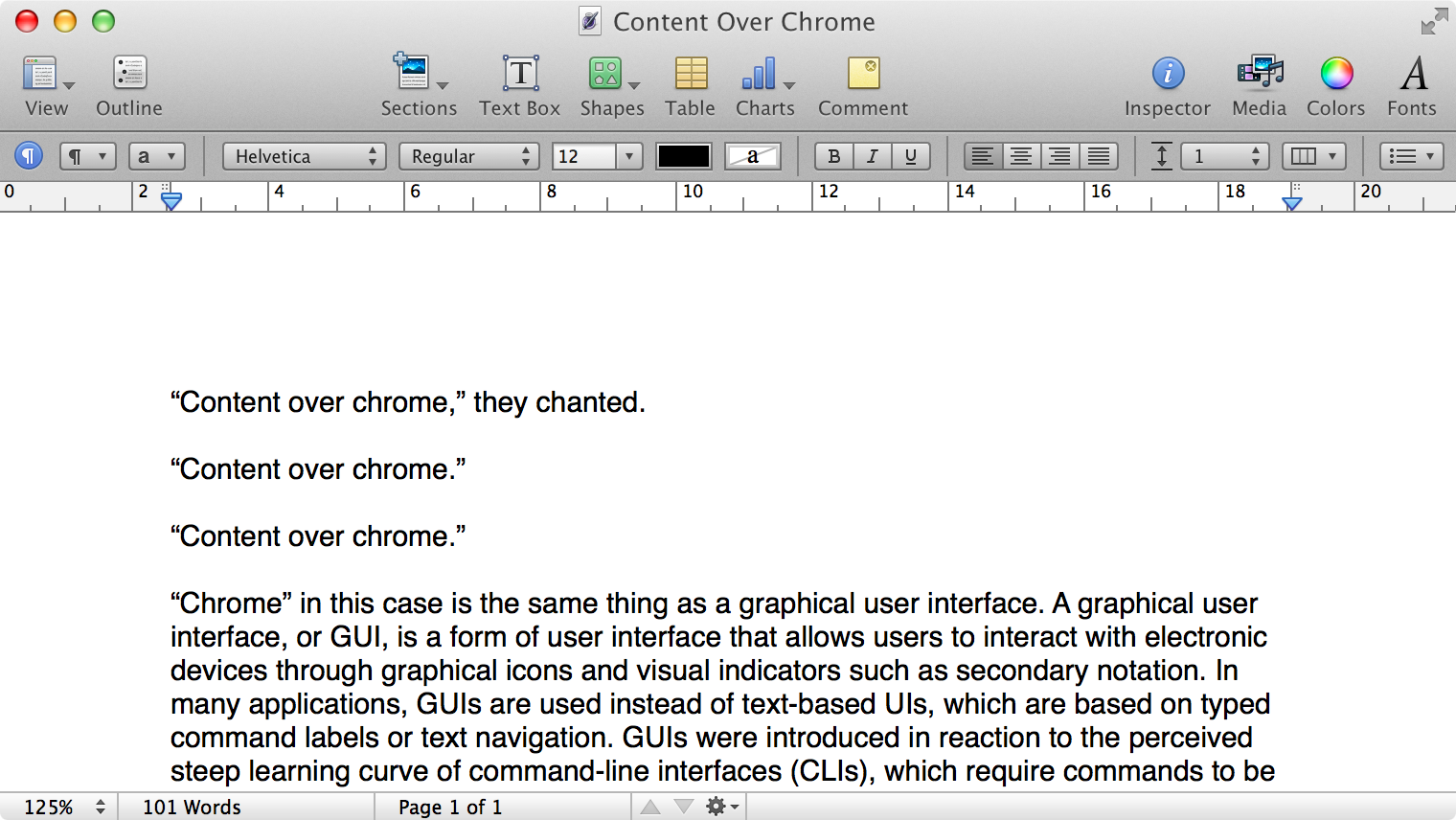

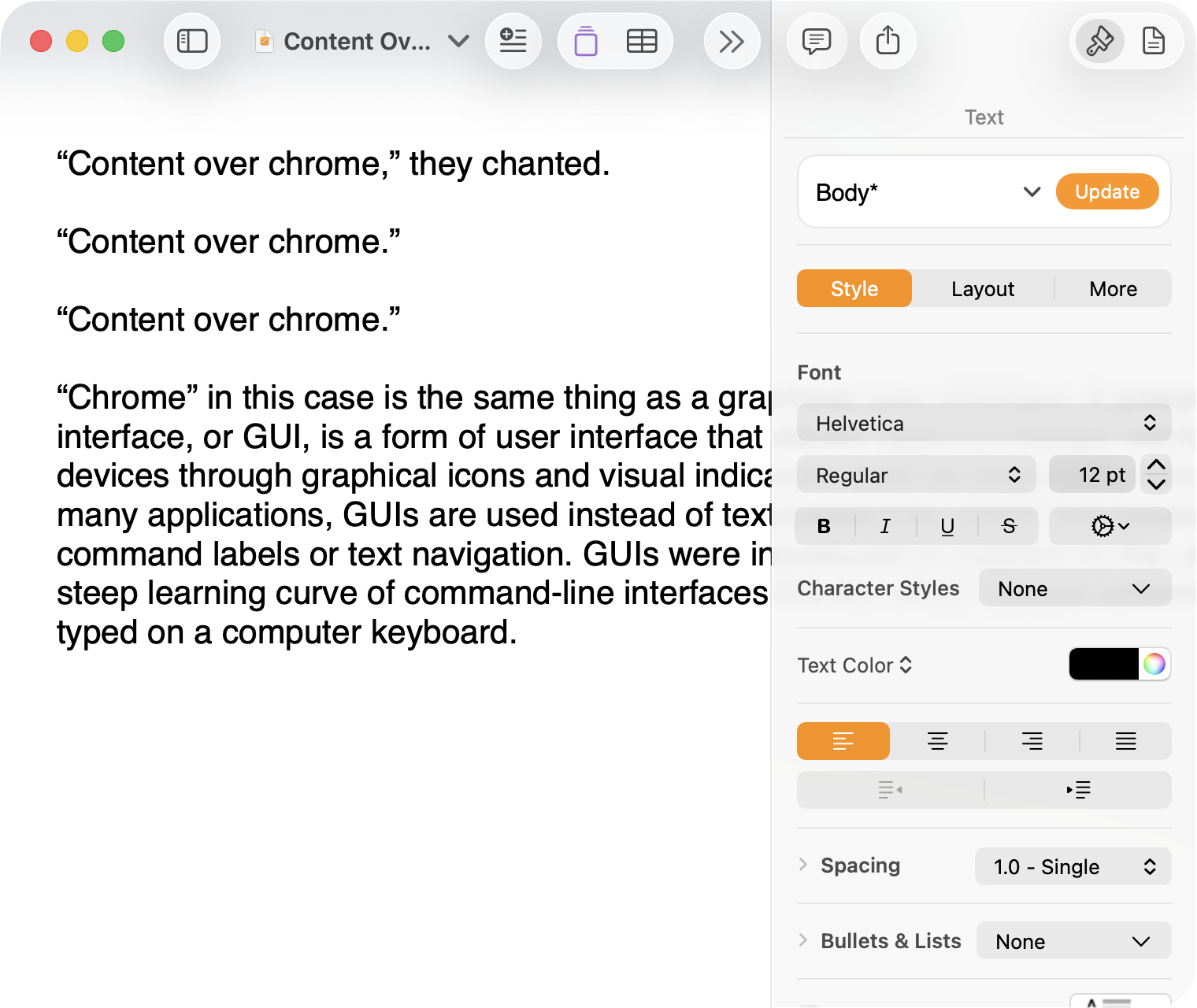

Here is what a Pages document looks like running under Mac OS X Lion:

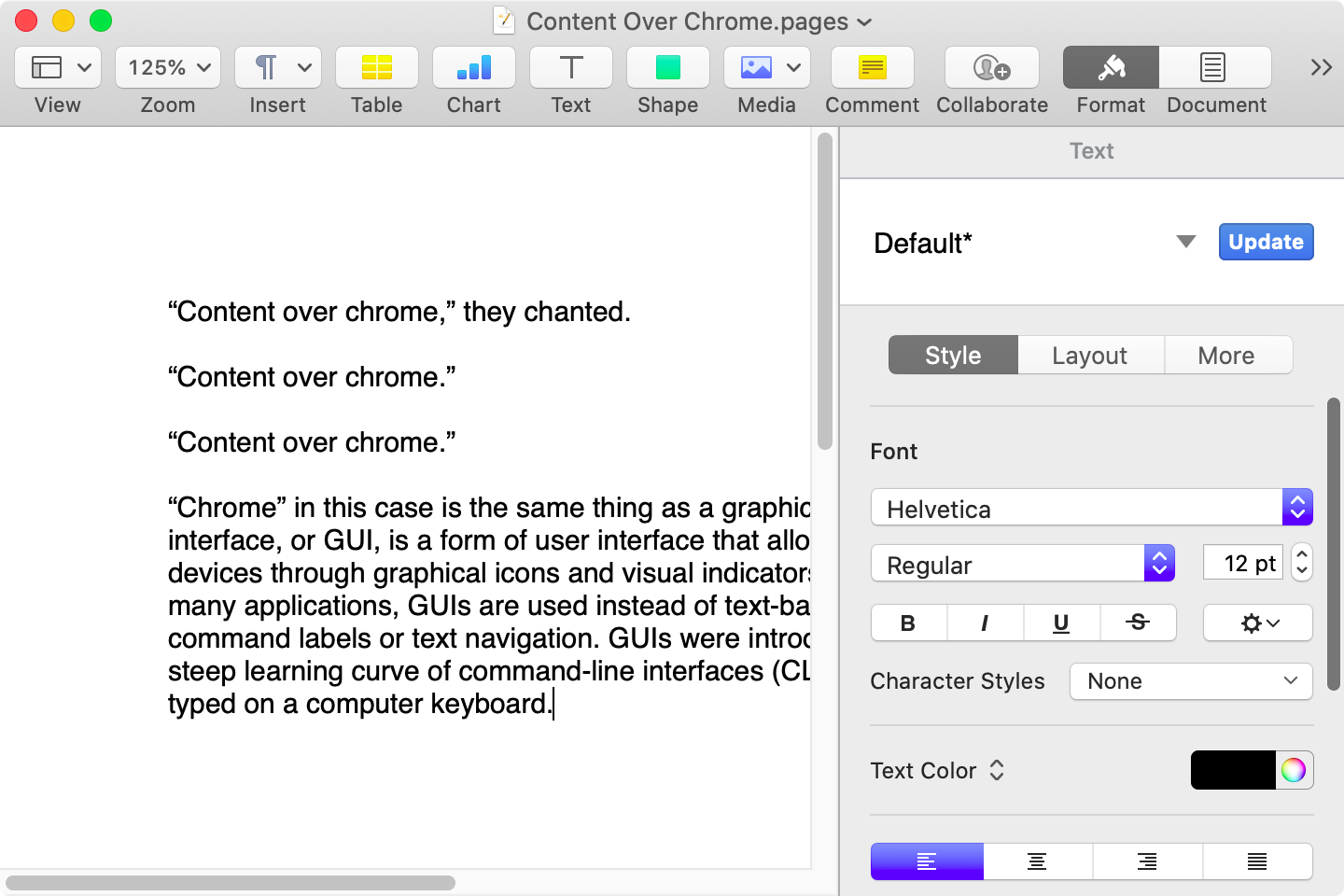

Here is that same document in a newer version of Pages running on MacOS Catalina, with the Yosemite-era design language that replaced the one that came before:

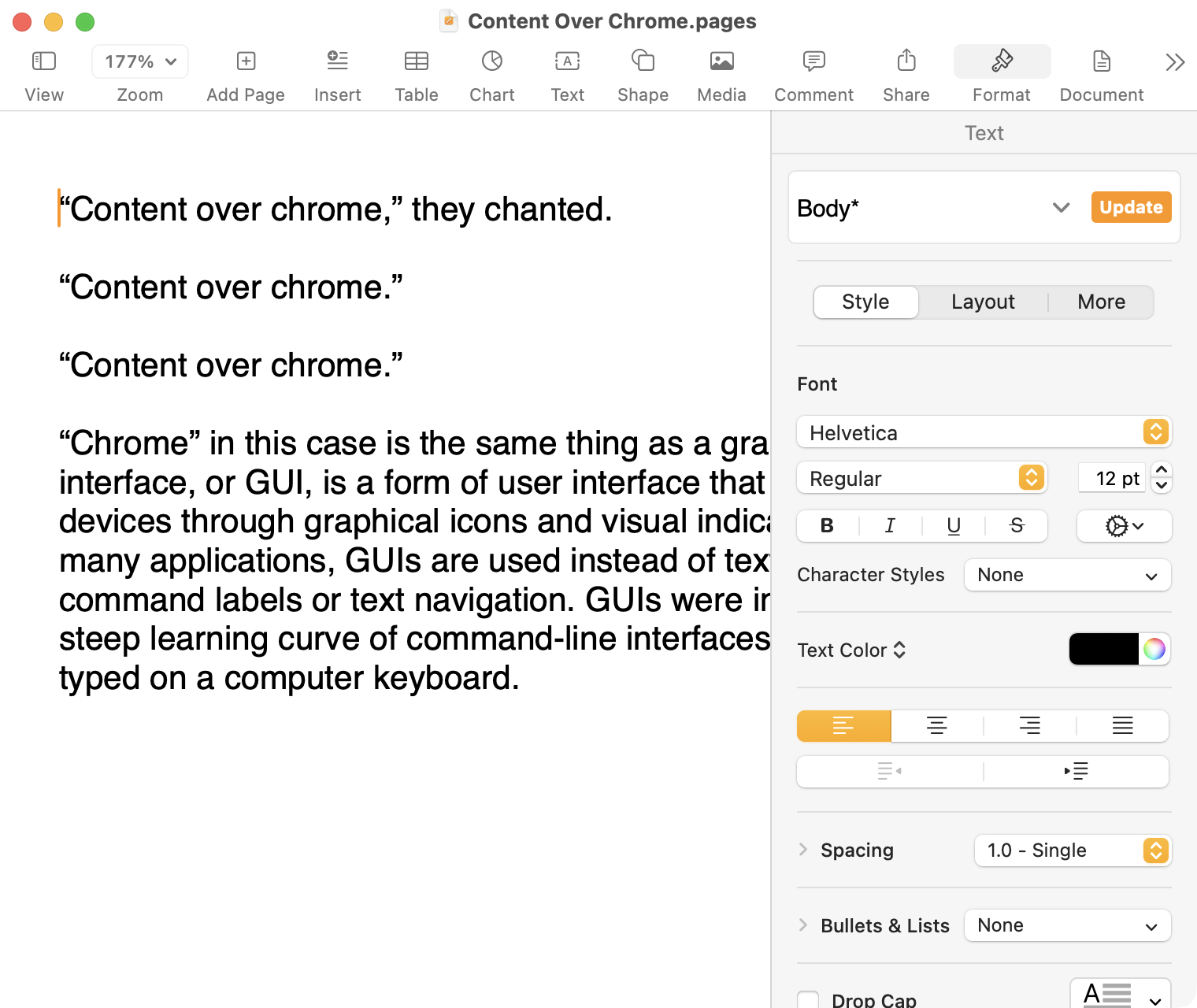

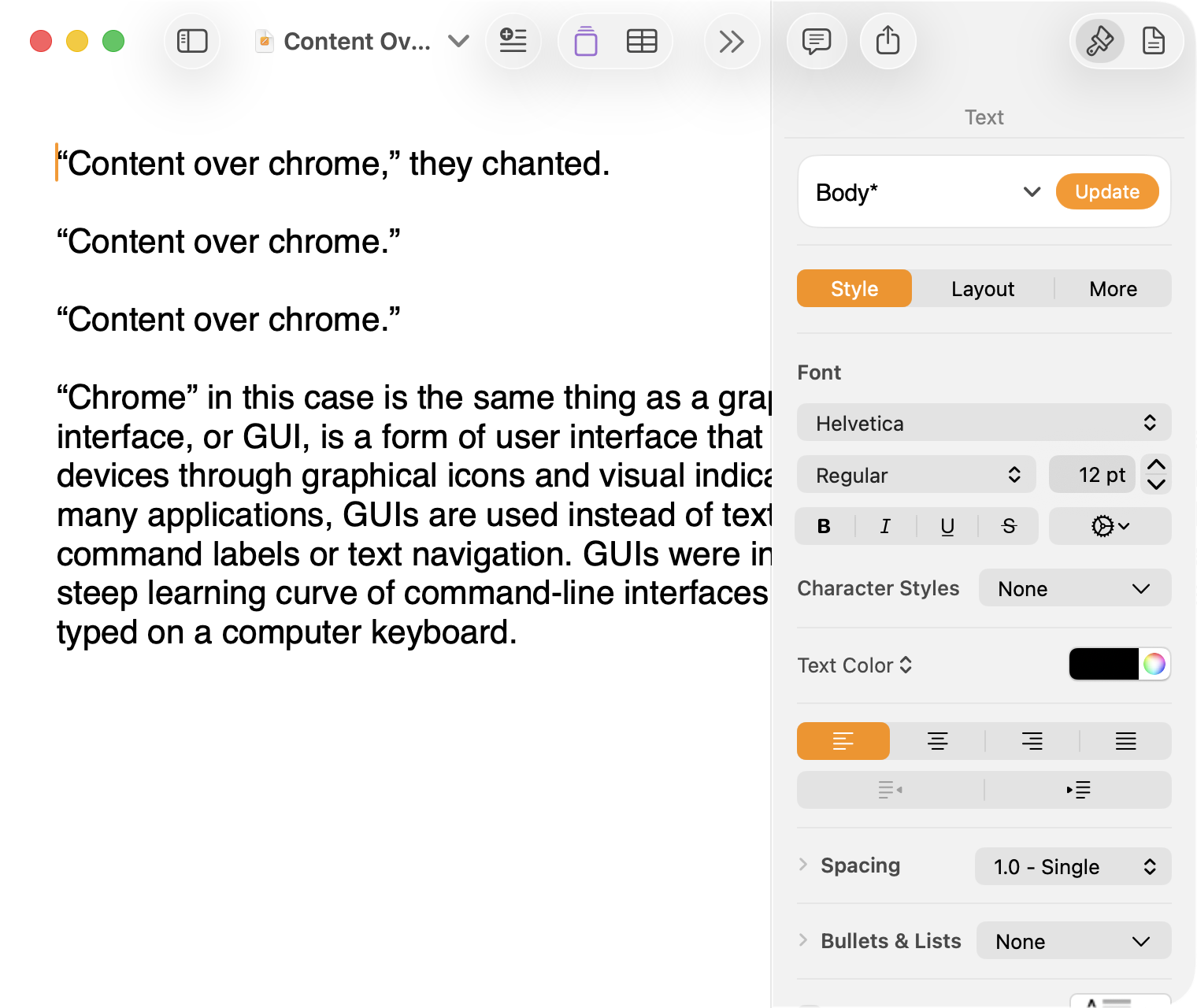

Here it is in the last version of Pages on MacOS Tahoe, using the design language introduced with Big Sur:

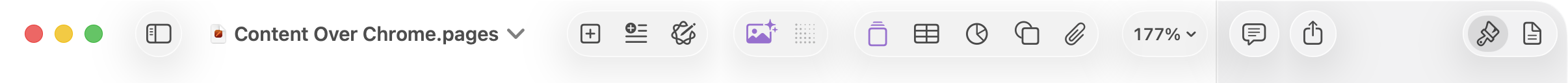

And, finally, the newest version of Pages on MacOS Tahoe using the current Liquid Glass visual design language:

There are welcome improvements in newer versions of this comparison, like the introduction of the “Format” panel on the right-hand side, which makes better use of widescreen landscape-oriented displays, and allows for larger controls. While I admire the density of the Lion-era screenshot, the mini-sized controls in that formatting menu are harder to click.1

Overall, however, what Apple has done to Pages over this period of time is representative of a broader trend of minimizing the delineation of user interface elements from each other and the document itself. This is the only tool in the toolbox, and I am skeptical it achieves what Apple intends.

Compare again the two more recent screenshots against the ones that came before, and focus on the toolbar at the top of each. In the older two, there is a well-defined separation between the toolbar — the window itself — and the document. In the Big Sur visual language, however, the toolbar is the same bright white as the document. By Tahoe and the Liquid Glass language, there is barely a distinction; the buttons simply float over the document. And, bizarrely, that degrades further with the “Reduce Transparency” accessibility preference enabled:

(Also, no, your eyes do not deceive you: the icons in the drop cap menu, barely visible in the lower-right, are indeed pixellated.)

For me, this means a constant distraction from my document because the whole window has a similar visual language. As the toolbar and its buttons become one with the document, they lose their ability to fade into the background. In the two older examples, the contrast of the well-defined toolbar allows me to treat them as an entirely separate thing I do not need to pay attention to.

This is further justified by the lower contrast within those two older toolbars. In Lion, the grey background and moderately saturated colours are a quiet reminder of tools that are available without them being intrusive. The mix of shapes is a sufficient differentiator, something Apple threw away in the following screenshot. By making all the buttons literal and with the same bright background, the toolbar becomes a little more distracting — but at least it does not blend into the document. Without the context of the previous screenshot, the colours of each icon seem almost random, and I find the yellow-on-white “Table” button difficult to distinguish at a glance from the black-on-yellow-on-white “Comment” button.

The Big Sur-era design language is, frankly, an atrocious regression. The heterogeneous shapes may have returned, but in the form of monochromatic medium-grey icons set against a uniform white background. The icons are not bad, per se — though putting “Add Page” and “Insert” next to each other in this default toolbar layout, both represented by a plus sign, is a little confusing. But I will bet you would not guess that some of these are buttons, while others are pop-up buttons with a submenu.

Finally, there is Liquid Glass which, in its default form, has more contrast than the previous example; with “Reduce Transparency” enabled, which is how I use MacOS, it has even less. The buttons themselves have a greater amount of internal contrast with bigger, darker grey icons on a white background. This is preferential within the context of the toolbar compared to the thin, small, and low-contrast buttons in the past example, but it also means this toolbar has similar contrast to the document itself.

I would not go so far as to argue that Pages ’09 has a perfect user interface and that everything since has been a regression. The average colours used for the icon fill in both older toolbars generally fails accessibility contrast checks which, remarkably, the Big Sur design will pass. The icons in Pages ’09 rely on dark outlines and unique shapes to have sufficient contrast with the toolbar background. However, Apple has since discarded most variables it could change to design these interfaces. Every button contains an icon of a single uniform colour, within barely defined holding containers of the same shape, and without text labels by default.

This monochromatic look means any splash of colour is distracting. The yellow accent used in Pages is garish — though, thankfully, something that can mostly be mitigated by changing the Theme Colour in System Settings, under Appearance. (Unfortunately, the yellow background remains on the “Update” button in the most recent version of Pages regardless of the system accent colour.) But perhaps you also noticed the purple icon in the Liquid Glass screenshot above. Here is the full toolbar:

Those purple icons signify features that are part of Apple Creator Studio, a paid subscription to Pages and other applications that allows you to — in the order they are presented above — generate an image, artificially boost the resolution of an image, and access a stock image library. If you would like to insert one of your own images into your Pages document, that feature has been moved to the paperclip icon. Yes, it is a menu and not a button, despite lacking the disclosure triangle of the zoom menu right beside it, and it also reminds you about the “Content Hub” and “Generate Image” features. In Pages under Lion, colour was used in the icons to help guide the user as they complete a task — click the green thing to add a shape; click the darker yellow thing to add a table. Colour is not being used in the newer version to signify these are A.I. features, as the “Writing Tools” icon remains dark grey. In this version, the coloured icons are there to guide the user to premium add-ons regardless of whether they are currently paying for them.

I decided to focus on Pages for this comparison because it has lived so many different lives in MacOS. However, it is perhaps an imperfect representation for the rest of the system. Across Mac OS X Lion, for example, the toolbars of first-party applications like Finder and Preview almost exclusively use monochromatic icons. This has been true since Mac OS X Leopard, which also introduced barely differentiated folder icons. Some toolbars in Tiger, introduced two years prior, featured icons inside uniform capsule shapes. These were questionable ideas at the time, but they still retained defining characteristics. The capsules, for example, may have had a uniform shape, but contained within were full-colour icons. Most importantly, they were all clearly controls that were differentiated from the document.

Perhaps Apple has some user studies that suggest otherwise, but I cannot see how dialling back the lines between interface and document is supposed to be beneficial for the user. It does not, in my use, result in less distraction while I am working in these apps. In fact, it often does the opposite. I do not think the prescription is rolling back to a decade-old design language. However, I think Apple should consider exploring the wealth of variables it can change to differentiate tools within toolbars, and to more clearly delineate window chrome from document.

-

These screenshots are a bit limited as, to capture a high-resolution interface, I switched my mid-2012 MacBook Air to a 720 × 450 display output, which shrank the available space for Pages in the Lion and Catalina screenshots. ↥︎

Software Quality Postscript and Clarification

I have a document open in BBEdit right now named “2025-06-22 – MacOS SaaS.markdown”. I started drafting this thing last year about how Apple has transitioned its operating systems to something closer to a software-as-a-service model. I was trying to describe how the difference between major versions has become generally more modest since many features are rolled out across the year, and how — particularly on Apple’s non-Mac platforms — updates are more-or-less forced since the company stops digitally certifying older versions.

It is not a perfect comparison and not quite a fully-developed idea — note the difference between the filename and the last sentence above — but I thought it was going somewhere. Of course, you had no idea about this because I never published, which is why it must have seemed strange when I dropped a reference to software-as-a-service in the middle of my piece about software quality:

There was a time when remaining on an older major version of an operating system or some piece of software meant you traded the excitement of new features for the predictability of stability. That trade-off no longer exists; software-as-a-service means an older version is just old, not necessarily more reliable.

Riccardo Mori was understandably confused by this:

[…] I very much enjoy using older Mac OS versions, but not being able to browse the Web properly and securely, not being able to correctly sign in to check a Gmail account, not being able to fetch some RSS feeds because you can’t authenticate securely or establish a secure connection is very frustrating. Not having Dropbox work on my 2009 MacBook Pro running OS X 10.11 El Capitan is a minor annoyance and means I just won’t have access to certain personal files and that I’ll have to sync manually whatever I do on this other machine.

But if I put these two factors aside, there’s nothing about those older Macs, nothing about the older Mac OS versions they run that makes them less reliable. […]

What Mori explains as this paragraph continues is what I had meant to write at the time. What I should have written was this (emphasis mine):

There was a time when remaining on an older major version of an operating system or some piece of software meant you traded the excitement of new features for the predictability of stability. That trade-off no longer exists; an operating system on a software-as-a-service treadmill means an older version is just old, not necessarily more reliable.

The cycle of having a major new version ready to preview by June and shipping in September means the amount of time Apple spends focusing on the current version must necessarily shrink. How many teams at the company do you suppose are, right now, working on MacOS 26 when WWDC is a little over three months away? Engineering efforts are undoubtably beginning to prioritize MacOS 27. There are new features to prepare, after all.

So, yes, what Mori writes is what I was trying to express. I wish I had given that sentence a little more thought. Do read Mori’s piece — the second part, “On Software Frugality”, is thought-provoking.

The Perfect Music App

Jon Hicks, last year:

Music apps leave me wanting.

While I collect albums both physically (Vinyl + CD) and digitally (from Bandcamp), there are still missing pieces that streaming services provide: discovering new music, sharing playlists and seeing what friends are playing so that I can try their recommendations. They’re a valuable part of my listening habits, but none of them feel like ‘the one’. […]

I only stumbled across this today, but it remains a wonderful encapsulation of the state of music apps today. I share Hicks’ criteria, though I would add three things for myself:

More expansive metadata. I would like genres that work more like tags. An artist may generally make records in one genre, but different albums have different influences. Even individual songs may considerably differ in sound and style. This is the kind of thing that would help me make playlists or find songs that sound better together.

This would be a management challenge across the tens of thousands of songs in my library, but I feel like integration with RateYourMusic and other databases might help partially automate this.

iPhone syncing over a wire. One of Hicks’ criteria is streaming and local library in the same app, and I completely agree. But I do not want anything — especially iPhone syncing — to be predicated on an assumption I have Apple’s first-party iCloud Music stuff turned on.

No lock-in. I want to be able to point it at my existing library and for things to just work. I would like to be able to import my entire setup from Music — all my playlists, including smart playlists, plus all my stats and ratings — and I would like it to be stored in a format some other application could read if I ever need to move to a different client in the future.

There are many indie apps that get close to this. I checked out Radiccio recently, but it unfortunately does not work with the iMac on which my music library is stored. Maybe that is the fourth criteria: backwards compatibility as far as possible.

Nobody has ever said I am easy to please.

The Political Effects of Twitter’s Feed Algorithm

Germain Gauthier, et al., in a recent peer-reviewed paper in Nature:

Feed algorithms are widely suspected to influence political attitudes. However, previous evidence from switching off the algorithm on Meta platforms found no political effects. Here we present results from a 2023 field experiment on Elon Musk’s platform X shedding light on this puzzle. We assigned active US-based users randomly to either an algorithmic or a chronological feed for 7 weeks, measuring political attitudes and online behaviour. Switching from a chronological to an algorithmic feed increased engagement and shifted political opinion towards more conservative positions, particularly regarding policy priorities, perceptions of criminal investigations into Donald Trump and views on the war in Ukraine. In contrast, switching from the algorithmic to the chronological feed had no comparable effects. Neither switching the algorithm on nor switching it off significantly affected affective polarization or self-reported partisanship. […]

One can be pedantic about the use of “algorithmic” and “the algorithm” to describe a particular set of rules for recommending tweets, given that you could also say a reverse-chronological timeline is its own kind of algorithm. A simple one, to be sure, but an algorithm. I will not quibble with this.

Here is one thing I will be pedantic about, though: this study is not an examination of the “political effects of X’s feed algorithm”, as the title of the study suggests. It was conducted in 2023 — just a little bit after Elon Musk bought the platform and when it was still named Twitter. That is a long time ago in online platform terms, and the recommendations engine has probably changed a lot since — but almost certainly not in the direction of political even-handedness — even though the GitHub commit log suggests it has not been.

This study’s design seems better to me than a report published shortly after the 2024 U.S. presidential election, which I found limited and unconvincing.

There should always be a way for users to set a reverse-chronological timeline, and to opt out of recommendations features. We should be suspicious of any platform that refuses to trust us with control over our own experience.

It Sure Looks to Me Like Meta Is Winding Down Its V.R. Efforts

Samantha Ryan, “VP of Content” at Meta’s Reality Labs:

We’ve recently made some pretty big changes, including right-sizing our Reality Labs investment to ensure that our efforts remain sustainable over time. We’ve been in this space for over a decade, and we aren’t going anywhere. We’re in it for the long haul.

By “right-sizing”, Ryan means laying off ten percent of the Reality Labs workforce, and pouring money into the Ray-Ban partnership instead of metaverse initiatives. By “in it for the long haul”, Ryan means shifting the definition of the “metaverse” to meet Mark Zuckerberg’s latest obsession. They did not whiff by renaming the entire company around a crappy update to Second Life; you just are not getting it.

Ryan:

Our goal remains constant: to empower developers and creators as they build long-term, sustainable businesses. We used to have a pretty well-defined audience for VR, but as we’ve grown, we’ve attracted new audiences — who want different things — and the onus is on us to make sure that each of these distinct groups can find the apps and games that appeal to them.

That’s why we’re changing our roadmaps to increase your chances for success. We’re explicitly separating our Quest VR platform from our Worlds platform in order to create more space for both products to grow. We’re doubling down on the VR developer ecosystem while shifting the focus of Worlds to be almost exclusively mobile. By breaking things down into two distinct platforms, we’ll be better able to clearly focus on each.

Meta can say it is “doubling down on the V.R. developer ecosystem” all it wants, but it announced in January it would be shutting down its work-focused V.R. app with only a month’s notice, and it has cancelled third-party headsets. Now, it is saying Horizon Worlds is basically a phone app. Last February, Andrew Bosworth wrote in a memo about the importance of this very strategy:

[…] And Horizon Worlds on mobile absolutely has to break out for our long term plans to have a chance. […]

As I write this, Meta Horizon is the fifty-seventh most popular free game in the Canadian App Store, just two spots behind Hole.io, “the most addictive black hole game”. Maybe people do not, in general, want to wear a computer on their entire head — not for the thousands of dollars Apple is charging, and not for the hundreds Meta is.

Personal (Computer) Assistants

For years, I’ve wanted a personal assistant. Someone who knows my preferences, manages my inbox, tracks my packages, and helps my family stay organized. The problem? Good assistants are expensive, require training, and still need constant direction.

So I built one. His name is Lobster. 🦞

The key insight that made this work wasn’t technical—it was conceptual. I stopped thinking “AI chatbot” and started thinking “new hire.”

I think this analogy is downright perfect.

When I first read this piece, my mind started to spin with all the things I could offload to my own digital personal assistant. Imagine how much time I could save by… wait. What could I use it for? Shahine says it helps summarize recent emails, figure out travel details, find event tickets, and more, all through iMessage conversations. This is a remarkable technical achievement. But what it drove home for me is how little I could ultimately relate to the scenarios presented by Shahine, even as I am trying to plan dinner with friends and a couple of trips later in the year.

Perhaps the same is true for you, too. Take a moment and think about what tasks you would give a personal assistant that can only work through software. Is it a long list? Is delegating checking your email saving you time? If you automate your vacation planning, does it make you happier than figuring that out alongside your partner or family? I am not saying Shahine is wrong or misguided. I just cannot see my life in this, and I do not think I am alone.

The story of one of my worst programming failures

Somewhat recently, GeePaw Hill shared the story of what he called his most humiliating experience as a skilled and successful computer programmer. It's an excellent, entertaining story with a lesson for all of us, so I urge you to read it. Today I'm going to tell the story of one of my great failures, where I may have quietly killed part of a professor's research project by developing on a too-small machine.

Once upon a time, back when I was an (advanced) undergraduate, I was hired as a part time research programmer for a Systems professor to work on one of their projects, at first with a new graduate student and then later alone (partly because the graduate student switched from Systems to HCI). One of this professor's research areas was understanding and analyzing disk IO patterns (a significant research area at the time), and my work was to add detailed IO tracing to the Ultrix kernel. Some of this was porting work the professor had done with the 4.x BSD kernel (while a graduate student and postdoc) into the closely related, BSD-derived Ultrix kernel, but we extended the original filesystem level tracing down all the way to capturing block IO traces (still specifically attributed to filesystem events).

We were working on Ultrix because my professor had a research and equipment grant from DEC. DEC was interested in this sort of information for improving the IO performance of the Ultrix kernel, and part of the benefit of working with DEC was that DEC could arrange for us to get IO traces from real customers with real workloads, instead of university research system workloads. Eventually the modified kernel worked, gathered all the data that we wanted (and gave us some insights even on our systems), and was ready for the customer site. We talked to DEC and it was decided that the best approach was that I would go down to Boston with the source code, meet with the DEC people involved, we'd build a kernel for the customer's setup, and then I'd go with the DEC people to the customer site to actually boot into it and turn the tracing on.

Very shortly after we booted the new kernel on the customer's machine and turned tracing on, the kernel paniced. It was a nice, clear panic message from my own code, basically an assertion failure, and what it said was more or less 'disk block number too large to fit into data field'. I looked at that and had a terrible sinking feeling.

This was long enough ago (with small enough disks) that having very compact trace data was extremely important, especially at the block IO layer (where we were generating a lot of trace records). As a result, I'd carefully designed the on-disk trace records to be as small as possible. As part of that I'd tried to cut down the size of fields to be only as big as necessary, and one of the fields I'd minimized was the disk block address of the IO. My minimized field was big enough for the block addresses on our Ultrix machines (donated by DEC), with not very big disks, but it was now obviously too small for the bigger disks that the company had bought from DEC for their servers. In a way I was lucky that I'd taken the precaution of putting in the size check that paniced, because otherwise we could have happily wasted time collecting corrupted traces with truncated block addresses.

(All of this was long enough ago that I can't remember how small the field was, although my mind wants to say 24 bits. If it was 24 bits, I had to be using 4 Kbyte filesystem block addresses, not 512-byte sector addresses.)

Once I saw the panic message, both the mistake and the fix were obvious, and the code and so on were well structured enough that it was simple to make the change; I could almost have done it on the spot (or at least while in Boston). But, well, you only get one kernel panic from your new "we assure you this is going to work" kernel on a customer's machine, especially if you only have one evening to gather your trace data and you can't rebuild a kernel from source while at the customer's site, so the DEC people and I had to pack up and go back empty handed. Afterward, I flew back to Toronto from Boston, made the simple change, and tested everything. But I never went back to Boston for another visit with DEC, and I don't think that part of my professor's research projects went anywhere much after that.

(My visit to Boston and its areas did feature getting driven around at somewhat unnervingly fast speeds on the Massachusetts Turnpike in the sports car of one of the DEC people involved.)

So that's the story of how I may have quietly killed one of my professor's research projects by developing on a too-small machine.

(That's obviously not the only problem. When I was picking the field size, I could have reached out somehow to ask how big DEC's disks got, or maybe ran the field size past my professor to see if it made sense. But I was working alone and being trusted with all of this, and I was an undergraduate, although I had significant professional programming experience by then.)

Sidebar: Fixing an earlier spectacular failure

(All of the following is based on my fallible memory.)

The tracing code worked by adding trace records to a buffer in memory and then writing out the buffer to the trace file when it was necessary. The BSD version of the code that I started with (which traced only filesystem level IO) did this synchronously, created trace records even for writing out the trace buffer, and didn't protect itself against being called again. A recursive call would deadlock but usually it all worked because you didn't add too many new trace records while writing out the buffer.

(Basically, everything that added a trace record to the buffer checked to see if the buffer was too full and if it was, immediately called the 'flush the trace buffer' code.)

This approach blew up spectacularly when I added block IO tracing; the much higher volume of records being added made deadlocks relatively common. The whole approach to writing out the trace buffer had to change completely, into a much more complex one with multiple processes involved and genuinely asynchronous writeout. I still have a vivid memory of making this relatively significant restructuring and then doing a RCS ci with a commit message that included a long, then current computing quote about replacing one set of code with known bugs with a new set of code with new unknown ones.

(At this remove I have no idea what the exact quote was and I can't find it in a quick online search. And unfortunately the code and its RCS history is long since gone.)

Power glitches can leave computer hardware in weird states

Late Friday night, the university's downtown campus experienced some sort of power glitch or power event. A few machines rebooted, a number of machines dropped out of contact for a bit (which probably indicates some switches restarting), and most significantly, some of our switches wound up in a weird, non-working state despite being powered on. This morning we cured the situation by fully power cycling all of them.

This isn't the first time we've seen brief power glitches leave things in unusual states. In the past we've seen it with servers, with BMCs (IPMIs), and with switches. It's usually not every machine, either; some machines won't notice and some will. When we were having semi-regular power glitches, there were definitely some models of server that were more prone to problems than others, but even among those models it usually wasn't universal.

It's fun to speculate about reasons why some particular servers of a susceptible model would survive and others not, but that's somewhat beside today's point, which is that power glitches can get your hardware into weird states (and your hardware isn't broken when and because this happens; it can happen to hardware that's in perfectly good order). We'd like to think that the computers around us are binary, either shut off entirely or working properly, but that clearly isn't the case. A power glitch like this peels back the comforting illusion to show us the unhappy analog truth underneath. Modern computers do a lot of work to protect themselves from such analog problems, but obviously it doesn't always work completely.

(My wild speculation is that the power glitch has shifted at least part of the overall system into a state that's normally impossible, and either this can't be recovered from or the rest of the system doesn't realize that it has to take steps to recover, for example forcing a full restart. See also flea power, where a powered off system still retains some power, and sometimes this matters.)

PS: We've also had a few cases where power cycling the hardware wasn't enough, which is almost certainly flea power at work.

PPS: My steadily increasing awareness of the fundamentally analog nature of a lot of what I take as comfortably digital has come in part from exposure on the Fediverse to people who deal with fun things like differential signaling for copper Ethernet, USB, and PCIe, and the spooky world of DDR training, where very early on your system goes to some effort to work out the signal characteristics of your particular motherboard, RAM, and so on so that it can run the RAM as fast as possible (cf).

(Never mind all of the CPU errata about unusual situations that aren't quite handled properly.)

If there are URLs in your HTTP User-Agent, they should exist and work

One of the things people put in their HTTP User-Agent header for non-browser software is a URL for their software, project, or whatever (I'm all for this). This is a a good thing, because it allows people operating web servers to check out who and what you are and decide for themselves if they're going to allow it. Increasingly (and partly for social reasons), I block many 'generic' User-Agent values that come to my attention, for example through their volume.

(I don't block all of them, but if your User-Agent shows up and I can't figure out what it is and whether or not it's legitimate and used by real people, that's probably a block.)

However, there's an important and obvious thing about any URLs in your HTTP User-Agent, which is that they should actually work. The domain or host should exist, the URL should exist in the web server, and the URL's contents should actually explain the software, project, or organization involved. Plus, if you use a HTTPS website, the TLS certificate should be valid.

(A related thing is a generic URL that doesn't give me anything to go on. For example, your URL on a code forge, and either it's not obvious which one of your repositories is doing things or you don't have any public repositories.)

For me, a non-working URL is much more suspicious than a missing URL. HTTP User-Agents without URLs are reasonably common (especially in feed readers), so I don't find them immediately suspicious. Non-working URLs in mysterious User-Agents certainly look like you're attempting to distract me with the appearance of a proper web agent but without the reality of it. If a User-Agent with such a non-working URL comes to my attention, I'm very likely to block it in some way (unless it's very clear that it's a legitimate program used by real people, and it merely has bad habits with its User-Agent).

You would think that people wouldn't make this sort of mistake, but I regret to say that I've seen it repeatedly, in all of the variations. One interesting version I've seen is User-Agent strings with the various 'example.<TLD>' domains in their URLs. I suspect that this comes from software that has some sort of 'operator URL' setting and provides a default value if you don't set one explicitly. I've also seen .lan and .local URLs in User-Agents, which takes somewhat more creativity.

As usual, my view is that software shouldn't provide this sort of default value; instead, it should refuse to work until you configure your own value. However, this makes it slightly more annoying to use, so it will be less popular than more accommodating software. Of course, we can change that calculation by blocking everything that mentions 'example.com', 'example.org', 'example.net' and so on in its User-Agent.

Restricting IP address access to specific ports in eBPF: a sketch

The other day I covered how I think systemd's IPAddressAllow and IPAddressDeny restrictions work, which unfortunately only allows you to limit this to specific (local) ports only if you set up the sockets for those ports in a separate systemd.socket unit. Naturally this raises the question of whether there is a good, scalable way to restrict access to specific ports in eBPF that systemd (or other interested parties) could use. I think the answer is yes, so here is a sketch of how I think you'd this.

Why we care about a 'scalable' way to do this is because systemd generates and installs its eBPF programs on the fly. Since tcpdump can do this sort of cross-port matching, we could write an eBPF program that did it directly. But such a program could get complex if we were matching a bunch of things, and that complexity might make it hard to generate on the fly (or at least make it complex enough that systemd and other programs didn't want to). So we'd like a way that still allows you to generate a simple eBPF program.

Systemd uses cgroup socket SKB eBPF programs, which attach to a cgroup and filter all network packets on ingress or egress. As far as I can understand from staring at code, these are implemented by extracting the IPv4 or IPv4 address of the other side from the SKB and then querying what eBPF calls a LPM (Longest Prefix Match) map. The normal way to use an LPM map is to use the CIDR prefix length and the start of the CIDR network as the key (for individual IPv4 addresses, the prefix length is 32), and then match against them, so this is what systemd's cgroup program does. This is a nicely scalable way to handle the problem; the eBPF program itself is basically constant, and you have a couple of eBPF maps (for the allow and deny sides) that systemd populates with the relevant information from IPAddressAllow and IPAddressDeny.

However, there's nothing in eBPF that requires the keys to be just CIDR prefixes plus IP addresses. A LPM map key has to start with a 32-bit prefix, but the size of the rest of the key can vary. This means that we can make our keys be 16 bits longer and stick the port number in front of the IP address (and increase the CIDR prefix size appropriately). So to match packets to port 22 from 128.100.0.0/16, your key would be (u32) 32 for the prefix length then something like 0x00 0x16 0x80 0x64 0x00 0x00 (if I'm doing the math and understanding the structure right). When you query this LPM map, you put the appropriate port number in front of the IP address.

This does mean that each separate port with a separate set of IP address restrictions needs its own set of map entries. If you wanted a set of ports to all have a common set of restrictions, you could use a normally structured LPM map and a second plain hash map where the keys are port numbers. Then you check the port and the IP address separately, rather than trying to combine them in one lookup. And there are more complex schemes if you need them.

Which scheme you'd use depends on how you expect port based access restrictions to be used. Do you expect several different ports, each with its own set of IP access restrictions (or only one port)? Then my first scheme is only a minor change from systemd's current setup, and it's easy to extend it to general IP address controls as well (just use a port number of zero to mean 'this applies to all ports'). If you expect sets of ports to all use a common set of IP access controls, or several sets of ports with different restrictions for each set, then you might want a scheme with more maps.

(In theory you could write this eBPF program and set up these maps yourself, then use systemd resource control features to attach them to your .service unit. In practice, at that point you probably should write host firewall rules instead, it's likely to be simpler. But see this blog post and the related VCS repository, although that uses a more hard-coded approach.)

Your terminal program has to be where xterm's ziconbeep feature is handled

I recently wrote about things that make me so attached to xterm. One of those things is xterm's ziconbeep feature, which causes xterm to visibly and perhaps audibly react when it's iconified or minimized and gets output. A commentator suggested that this feature should ideally be done in the window manager, where it could be more general. Unfortunately we can't do the equivalent of ziconbeep in the window manager, or at least we can't do all of it.

A window manager can sound an audible alert when a specific type of window changes its title in a certain way. This would give us the 'beep' part of ziconbeep in a general way, although we're treading toward a programmable window manager. But then, Gnome Shell now does a lot of stuff in JavaScript and its extensions are written in JS and the whole thing doesn't usually blow up. So we've got prior art for writing an extension that reacts to window title changes and does stuff.

What the window manager can't really do is reliably detect when the window has new output, in order to trigger any beeping and change the visible window title. As far as I know, neither X nor Wayland give you particularly good visibility into whether the program is rendering things, and in some ways of building GUIs, you're always drawing things. In theory, a program might opt to detect that it's been minimized and isn't visible and so not render any updates at all (although it will be tracking what to draw for when it's not minimized), but in practice I think this is unfashionable because it gets in the way of various sorts of live previews of minimized windows (where you want the window's drawing surface to reflect its current state).

Another limitation of this as a general window manager feature is that the window manager doesn't know what changes in the appearance of a window are semantically meaningful and which ones are happening because, for example, you just changed some font preference and the program is picking up on that. Only the program itself knows what's semantically meaningful enough to signal for people's attention. A terminal program can have a simple definition but other programs don't necessarily; your mail client might decide that only certain sorts of new email should trigger a discreet 'pay attention to me' marker.

(Even in a terminal program you might want more control over this than xterm gives you. For example, you might want the terminal program to not trigger 'zicon' stuff for text output but instead to do it when the running program finishes and you return to the shell prompt. This is best done by being able to signal the terminal program through escape sequences.)

How I think systemd IP address restrictions on socket units works

Among the systemd resource controls

are IPAddressAllow= and IPAddressDeny=,

which allow you to limit what IP addresses your systemd thing can

interact with. This is implemented with eBPF.

A limitation of these as applied to systemd .service units is that

they restrict all traffic, both inbound connections and things your

service initiates (like, say, DNS lookups), while you may want

only a simple inbound connection filter.

However, you can also set these on systemd.socket

units. If you do, your IP address restrictions apply only to the socket (or

sockets), not to the service unit that it starts. To quote the

documentation:

Note that for socket-activated services, the IP access list configured on the socket unit applies to all sockets associated with it directly, but not to any sockets created by the ultimately activated services for it.

So if you have a systemd socket activated service, you can control who can access the socket without restricting who the service itself can talk to.

In general, systemd IP access controls are done through eBPF programs set up on cgroups. If you set up IP access controls on a socket, such as ssh.socket in Ubuntu 24.04, you do get such eBPF programs attached to the ssh.socket cgroup (and there is a ssh.socket cgroup, perhaps because of the eBPF programs):

# pwd /sys/fs/cgroup/system.slice # bpftool cgroup list ssh.socket ID AttachType AttachFlags Name 12 cgroup_inet_ingress multi sd_fw_ingress 11 cgroup_inet_egress multi sd_fw_egress

However, if you look there are no processes or threads in the ssh.socket cgroup, which is not really surprising but also means there is nothing there for these eBPF programs to apply to. And if you dump the eBPF program itself (with 'ebpftool dump xlated id 12'), it doesn't really look like it checks for the port number.

What I think must be going on is that the eBPF filtering program

is connected to the SSH socket itself. Since I can't find any

relevant looking uses in the systemd code of the `SO_ATTACH_*'

BPF related options from socket(7) (which

would be used with setsockopt(2) to

directly attach programs to a socket), I assume that what happens

is that if you create or perhaps start using a socket within a

cgroup, that socket gets tied to the cgroup and its eBPF programs,

and this attachment stays when the socket is passed to another

program in a different cgroup.

(I don't know if there's any way to see what eBPF programs are attached to a socket or a file descriptor for a socket.)

If this is what's going on, it unfortunately means that there's no way to extend this feature of socket units to get per-port IP access control in .service units. Systemd isn't writing special eBPF filter programs for socket units that only apply to those exact ports, which you could in theory reuse for a service unit; instead, it's arranging to connect (only) specific sockets to its general, broad IP access control eBPF programs. Programs that make their own listening sockets won't be doing anything to get eBPF programs attached to them (and only them), so we're out of luck.

(One could experiment with relocating programs between cgroups, with the initial cgroup in which the program creates its listening sockets restricted and the other not, but I will leave that up to interested parties.)

Sometimes, non-general solutions are the right answer

I have a Python program that calculates and prints various pieces of Linux memory information on a per-cgroup basis. In the beginning, its life was simple; cgroups had a total memory use that was split between 'user' and '(filesystem) cache', so the program only needed to display either one field or a primary field plus a secondary field. Then I discovered that there was additional important (ie, large) kernel memory use in cgroups and added the ability to report it as an additional option for the secondary field. However, this wasn't really ideal, because now I had a three-way split and I might want to see all three things at once.

A while back I wrote up my realization about flexible string formatting with named arguments. This sparked all sorts of thoughts about writing a general solution for my program that could show any number of fields. Recently I took a stab at implementing this and rapidly ran into problems figuring out how I wanted to do it. I had multiple things that could be calculated and presented, I had to print not just the values but also a header with the right field names, I'd need to think about how I structured argparse argument groups in light of argparse not supporting nested groups, and so on. At a minimum this wasn't going to be a quick change; I was looking at significantly rewriting how the program printed its output.

The other day, I had an obvious realization: while it would be nice to have a fully general solution that could print any number of additional fields, which would meet my needs now and in the future, all that I needed right now was an additional three-field version with the extra fields hard-coded and the whole thing selected through a new command line argument. And this command line argument could drop right into the existing argparse exclusive group for choosing the second field, even though this feels inelegant.

(The fields I want to show are added with '-c' and '-k' respectively in the two field display, so the morally correct way to select both at once would be '-ck', but currently they're exclusive options, which is enforced by argparse. So I added a third option, literally '-b' for 'both'.)

Actually implementing this hard-coded version was a bit annoying for structural reasons, but I put the whole thing together in not very long; certainly it was much faster than a careful redesign and rewrite (in an output pattern I haven't used before, no less). It's not necessarily the right answer for the long term, but it's definitely the right answer for now (and I'm glad I talked myself into doing it).

(I'm definitely tempted to go back and restructure the whole output reporting to be general. But now there's no rush to it; it's not blocking a feature I want, it's a cleanup.)

A taxonomy of text output (from tools that want to be too clever)

One of my long standing gripes with Debian and Ubuntu is, well, I'll quote myself on the Fediverse:

I understand that Debian wants me to use 'apt' instead of apt-get, but the big reason I don't want to is because you can't turn off that progress bar at the bottom of your screen (or at least if you can it's not documented). That curses progress bar is something that I absolutely don't want (and it would make some of our tooling explode, yes we have tooling around apt-get).

Over time, I've developed opinions on what I want to see tools do for progress reports and other text output, and what I feel is increasingly too clever in tools that makes them more and more inconvenient for me. Today I'm going to try to run down that taxonomy, from best to worst.

- Line by line output in plain text with no colours.

- Represent progress by printing successive dots (or other characters) on

the line until finally you print a newline. This is easy to capture and

process later, since the end result is a newline terminated line with

no control characters.

- Reporting progress by printing dots (or other characters) and then

backspacing over them to erase them later. Pagers like

lesshave some ability to handle backspaces, but this will give you heartburn in your own programs. - Reporting progress by repeatedly printing a line, backspacing over

it, and reprinting it (as apt-get does). This produces a lot more

output, but I think

lessand anything that already deals with backspacing over things will generally be able to handle this. I believe apt-get does this. - Any sort of line output with colours (which don't work in my environment,

and when they do work they're usually unreadable). Any sort of

terminal codes in the output make it complicated to capture the

output with tools like

scriptand then look over them later with pagers likeless, althoughlesscan process a limited amount of terminal codes, including colours. - Progress bar animation on one line with cursor controls and other

special characters. This looks appealing but generates a lot more

output and is increasingly hard for programs like

lessto display, search, or analyze and process. However, your terminal program of choice is probably still going to see this as line by line output and preserve various aspects of scrollback and so on. - Progress output that moves the cursor and the output from its

normal line to elsewhere on screen, such as at the bottom (as 'apt

autoremove' and other bits of 'apt' do). Now you have a full

screen program; viewing, reconstructing, and searching its output

later is extremely difficult, and its output will blow up

increasingly spectacularly if it's wrong about your window size

(including if you resize things while it's running) or what

terminal sequences your window responds to. Terminal programs

and terminal environments such as tmux or screen may well throw

up their hands at doing anything smart with the output, since

you look much like a full screen editor, a pager, or programs

like

top. In some environments this may damage or destroy terminal scrollback.An additional reason I dislike this style is that it causes output to not appear at the current line. When I run your command line program, I want your program to print its output right below where I started it, in order, because that's what everything else does. I don't want the output jumping around the screen to random other locations. The only programs I accept that from are genuine full screen programs like

top. Programs that insist on displaying things at random places on the screen are not really command line programs, they are TUIs cosplaying being CLIs. - Actual full screen output, as a text UI, with the program clearing the screen and printing status reports all over the place. Fortunately I don't think I've seen any 'command line' programs do this; anything that does tends to be clearly labeled as a TUI program, and people mostly don't provide TUIs for command line tools (partly because it's usually more work).

My strong system administrator's opinion is that if you're tempted

to do any of these other than the first, you should provide a command

line switch to turn these off. Also, you should detect unusual

settings of the $TERM environment variable, like 'dumb' or perhaps

'vt100', and automatically disable your smart output. And you should

definitely disable your smart output if $TERM isn't set or you're

not outputting to a (pseudo-)terminal.

(Programs that insist on fancy output no matter what make me very unhappy.)

Log messages are mostly for the people operating your software

I recently read Evan Hahn's The two kinds of error (via), which talks very briefly in passing about logging, and it sparked a thought. I've previously written my system administrator's view of what an error log level should mean, but that entry leaves out something fundamental about log messages, which is that under most circumstances, log messages are for the people operating your software (I've sort of said this before in a different context). When you're about to add a non-debug log message, one of the questions you should ask is what does someone running your program get out of seeing the message.

Speaking from my own experience, it's very easy to write log messages (and other messages) that are aimed at you, the person developing the program, script, or what have you. They're useful for debugging and for keeping track of the state of the program, and it's natural to write them that way since you're immersed in the program and have all of the context (this is especially a problem for infrequent error messages, which I've learned to make as verbose as possible, and a similar thing applies for infrequently logged messages). But if your software is successful (especially if it gets distributed to other people), most of the people running it won't be the developers, they'll only be operating it.

(This can include a future version of you when you haven't touched this piece of software for months.)

If you want your log messages to be useful for anything other than being mailed to you as part of a 'can you diagnose this' message, they need to be useful for the people operating the software. This doesn't mean 'only report errors that they can fix and need to', although that's part of it. It also means making the information you provide through logs be things that are useful and meaningful to people operating your software, and that they can understand without a magic decoder ring.