voice modems

If you've done much with modern cellphones, you've probably noticed just how odd the architecture can be around audio. Specifically, I mean call audio: modern smartphones have made call audio less of a special case (mostly by just becoming more complicated in general), but in older phones you would often find arrangements where the cellular modem 1 had direct analog audio to the microphone and speaker, perhaps via some switching to share amplifiers. That design meant that the cellular modem functioned basically as a completely independent device, a fully-capable "cellular phone" with the ability to make and receive voice calls. The role of the rest of the smartphone, and its operating system, was just to provide control messages for starting and ending calls.

In modern phones the audio path to and from the modem is digital and it's more integrated into the operating system audio service, but still not fully. You might have noticed, for example, that it is excessively difficult to record call audio on most phones. Regulatory and liability pressures are one reason for this, but another is that it's actually kind of difficult: there may not be any physical way for software running on the main processor to receive audio from the cellular modem. The designer has to put in explicit effort to make that work, effort that only became common more recently to facilitate automatic transcription—and VoLTE, a whole complication that I will simply ignore for the sake of a cleaner historical narrative. You come here to read about old phones, not new ones.

You've probably read enough of my writing to know where this is going: the design of cellular radios, which assume call audio to be part of Their Exclusive Domain, is a legacy of an age-old architectural decision traceable to the original Hayes Smartmodem. It relates to a feature of modems that was widely available, but sparsely used, for much of the PC revolution. The details are odd!

First, for context, let's recede into our mind palaces and travel back to the 1980s. AT&T-designed modems like the Bell 103 had created a standardized family of protocols for data over voice lines, and a company called Hayes introduced a Bell 103-like implementation called the Smartmodem. The Smartmodem was quite successful on its own, but it was more significant for having introduced a common control interface between the modem and the computer. Previous modems had acted as transparent devices that expected Something Else to perform call setup tasks, while the Hayes Smartmodem could pick up the line and dial all on its own. That required that the computer send commands to the modem to configure and start a call.

Hayes designed a simple scheme for sending commands to the modem and switching it in and out of transparent data mode, and that protocol was then widely copied by other modem manufacturers. You could call it the "Hayes command set," and older documents often do, but these days it's more commonly known by the two characters that prefix most commands: the AT protocol.
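To make the command scheme concrete, here is a minimal Python sketch of the host side of an exchange. It assumes the conventional behavior of Hayes-compatible modems in verbose mode: a command is the letters AT plus a body, terminated by a carriage return, and the reply comes back as CR/LF-delimited lines ending in a result code such as OK or ERROR. The helper names and the sample reply are made up for illustration, and no real serial port is opened.

```python
# Result codes that conventionally end a verbose-mode reply.
# CONNECT is matched separately because it may carry a speed suffix.
FINAL_RESULTS = {"OK", "ERROR", "NO CARRIER", "BUSY", "NO DIALTONE", "NO ANSWER"}


def format_command(body: str) -> bytes:
    """Build the bytes for one command line: 'AT' + body + carriage return."""
    return ("AT" + body + "\r").encode("ascii")


def parse_response(raw: bytes):
    """Split a reply into (information lines, result code or None)."""
    text = raw.decode("ascii", errors="replace")
    lines = [ln.strip() for ln in text.split("\r\n")]
    info, final = [], None
    for ln in lines:
        if not ln:
            continue  # skip the empty strings left by the CR/LF framing
        if ln in FINAL_RESULTS or ln.startswith("CONNECT"):
            final = ln
        else:
            info.append(ln)
    return info, final


# Hypothetical reply to an identification query; the text is invented.
sample_reply = b"\r\nExample Modem 1.0\r\n\r\nOK\r\n"
print(format_command("I"))
print(parse_response(sample_reply))
```

In a real program the bytes from `format_command` would be written to the modem's serial device and the reply read back until a result code appears; that transport layer is left out here on purpose.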

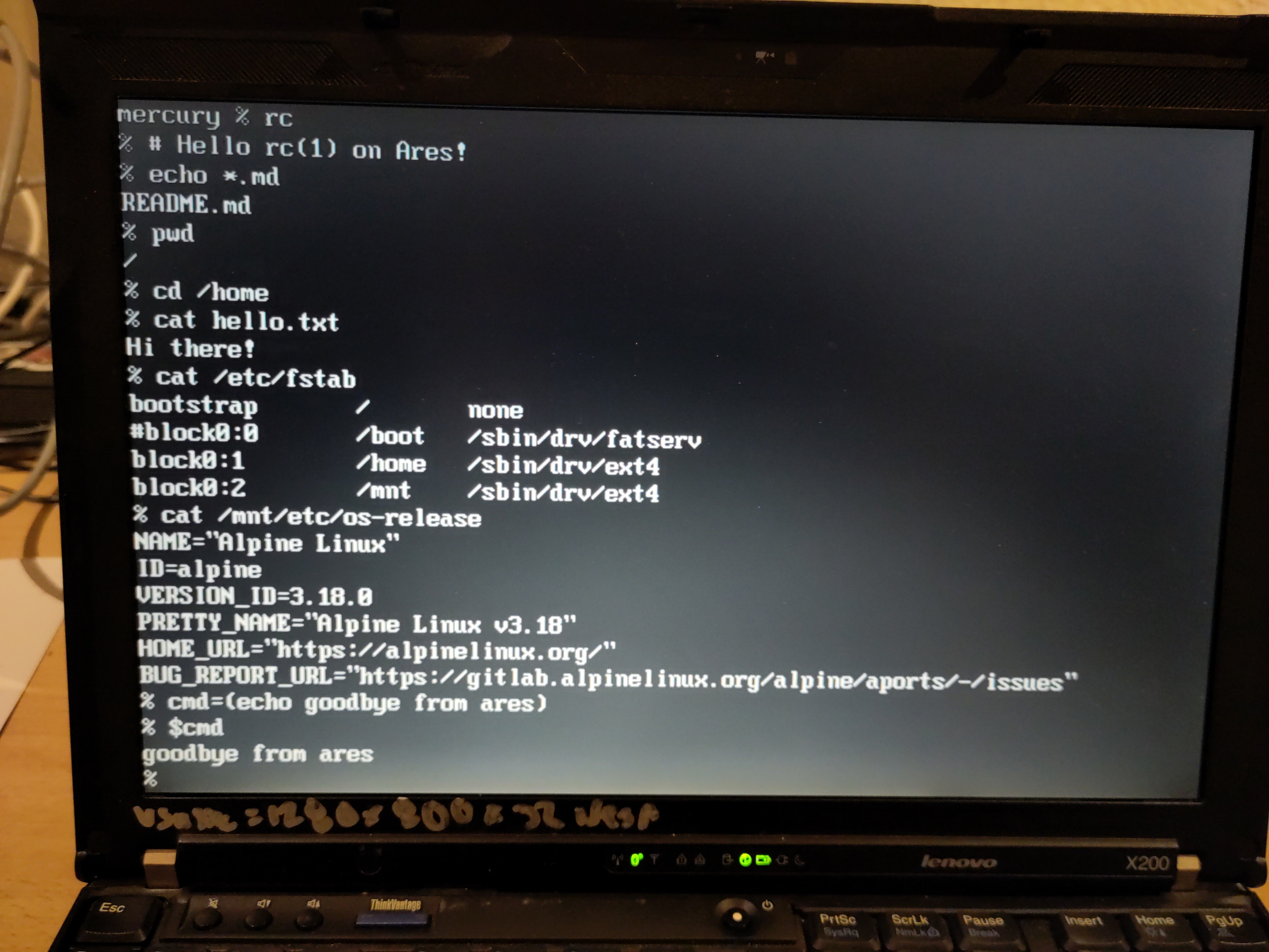

From its origin in 1981, AT has shown remarkable staying power. Virtually all computer-connected modems, to this very day, continue to use AT commands for basic configuration. Likewise, the basic architecture of the Smartmodem persists: the Smartmodem connected to the host computer using a single RS-232 link that switched between carrying control messages and data. The very latest 5G modems still work the same way, complicated by the addition of multiple separate UART serial channels (so that, for example, control commands, data, and GNSS data can each have their own separate channel) and the adoption of the USB communications device class "Abstract Control Model," a standard UART-over-USB implementation mostly intended to simplify modems. Plug a modern 5G modem into a Linux machine and you can easily observe this: virtually all cellular modems are USB-attached and will appear as a USB composite device with multiple serial adapters, usually attached as /dev/ttyACM* due to the USB-CDC ACM class.

Courtesy of the V.250 standard (a formalization of AT commands) and considerable effort by driver implementers, USB-attached modems "Just Work" as network interfaces on modern Linux—but under the hood, the kernel is communicating with the modem over separate serial interfaces. Back in the olden days, it was common to run PPP (point-to-point protocol) over one of the serial interfaces to use the actual data (bearer) channel, but now PPP has mostly given way to "Direct IP" where you just push packets over the serial link.

Just to complicate things a touch more, there are vendor-specific standards like QMI (Qualcomm) that completely replace AT and find use in modern smartphones, but they're messy with regard to Linux support. If you are personally interacting at this layer, messing with modems or writing communications software or whatever, you are almost certainly going to stick to AT commands. Modem vendors continue to build on AT. If you look at LTE modems made for IoT applications, for example, it's common for them to provide a complete HTTP implementation (and sometimes MQTT, and sometimes some kind of proprietary message broker protocol) accessible via AT commands. That means you can implement an IoT device without a network stack at all, deferring all network operations to the modem itself. With a JSON-over-HTTP backend, for example, you might send AT commands with JSON payloads over the serial control channel and then get JSON back. You never interact with the network at all; the modem is a completely self-contained system. At the extreme, you might implement your entire device using exclusively the modem. This is a common approach for telematics devices like GPS trackers: they consist of nothing but a cellular modem, the telemetry application is built for the modem using an SDK from its vendor, and you interact with it using AT commands. IoT-class modems frequently provide GPIO and user flash for just this purpose.

None of that is actually what this article is about, but I want to make clear how profound the implications of the Smartmodem heritage are. In 1981, the Smartmodem was a standalone device controlled over serial because the limitations of the era's computers made that a practical necessity. Processors weren't fast enough to run the modem DSP alongside other workloads, certification requirements for telephone-connected devices were stricter, etc. Despite the late-'90s detour into "winmodems," most of those constraints still exist, just in the different forms of the cellular network. Today's modems are less v.54 and more 5G, but they still act as standalone devices controlled over serial channels.

Most telephone modems of the 1980s were exclusively data modems. You could use AT commands to make a call, switch into data mode, and then you basically had a very long serial cable from your device to the computer on the other end of the call. That was all these modems did; their only interaction with "The Telephone System" besides as a pair of wires was for basic call control like detecting dial tone and sending DTMF dialing. That was quite natural considering their evolution from acoustic coupler modems (where you dialed the phone yourself and then set the handset on the modem), but by the late '80s, as devices like the Smartmodem with their own call control were common, it started to feel primitive. With Carterfone and the breakup of the AT&T monopoly, computers were starting to feel like first-class citizens on the telephone system. Shouldn't they have more complete support for, well, telephone things?

From a modern perspective, it might seem odd that fax came to modems before voice, but it makes technical sense. Fax machines use a digital protocol that is loosely derived from Bell 103 and belongs to the same extended family as other telephone modems, so modems already had the hardware. Implementing fax support was just a matter of software. With some extensions to the AT command set, your computer became a fax machine. By the late 1980s, fax support was common in modems, usually distinguished by marketing the modem as "data/fax."

For example, the command AT+FCLASS=1.0 changed the modem to T.31 fax mode (fax class 1.0). T.31/EIA-578 is a standard for sending and receiving faxes using a serial connection to a telephone modem, and it was widely implemented by commercial software packages. There were so many "PC fax" packages available in the 1990s that you could stock half an aisle of an OfficeMax with them, and indeed that's what happened. The legacy of this industry is that there are still dozens of "fax server" products built around data/fax modems, like the open source HylaFAX.

Fax modems also made a more general contribution to the modem state of the art: the concept of distinct modes. "Fax class 0" was data mode, while values like 1 and 2 and, oddly, 1.0 and 2.0 were used for different fax implementations. There was an obvious, and tantalizing, opportunity: more modes. Maybe, even, a modem mode for that most classic application of the telephone: voice. Could you use your computer for telephone calls?

The idea is obvious, so it's no surprise that several vendors were working on it all at once. Early efforts at telephone-on-computer could be quite comical, consisting of a telephone that was more or less glued to a computer, no electrical connectivity between them. The IBM Palm Top PC 110 is my favorite example of this form, a Japanese-market miniature laptop with a speaker and microphone on the front edge so that you could hold it up to your face to make a call. Besides amusement, it illustrates a fundamental challenge of merging computers with telephony: real-time media is hard.

It seems very funny to build a telephone into a computer because computers are general-purpose devices defined by software. Putting a phone in the computer should not mean physically putting a phone in the computer; the phone should obviously be a software application. Well, obviously from our modern perspective, but real-time media has always been difficult for computers (which, for architectural reasons, are mostly seen today as fundamentally asynchronous, non-real-time devices). Modern computers get away with it by brute force; they're just so fast that they can be wildly inefficient with media and still keep up to real-time. But things were different in the 1990s. Real-time audio processing was a fairly demanding application and most of the computer industry preferred to leave it to hardware.

Still, the voice modem was an inevitability. In 1991, the Los Angeles Times reported that at least three companies were working on some form of "modem with voice support" for 1992. They focused mainly on Rockwell International, which proved the right call. We don't remember Rockwell as a semiconductor company today, but in the 1990s they very much were—Rockwell Semiconductor later spun out into Conexant, now part of Synaptics. At the time, Rockwell was a major player in semiconductors, especially for communications.

Rockwell had particular expertise in answering telephones. During the 1970s, the Rockwell Galaxy Automatic Call Distributor just about invented the modern call center. It was the first digitally-controlled system that answered calls on a pool of telephone lines, placed them on hold, and distributed them to a pool of operators. The flexibility and efficiency of Rockwell's computer-controlled system, which was specifically designed to cut costs by presenting calls to operators as rapidly as possible, displaced AT&T's contact center systems (like turrets) and Rockwell had almost complete dominance in the new world of 1-800 customer call centers during the 1980s.

Rockwell did not manufacture complete modems, but instead chipsets that were integrated into modems by other manufacturers. That makes it a little tricky to figure out the first model that Rockwell shipped with voice support, but it was sometime in 1992. Rockwell's chips quickly led to a generation of "data/fax/voice" modems from all the usual manufacturers. Despite competition from other chipset vendors like Cirrus, Rockwell's voice modems became the Hayes of voice. By the mid-'90s, data/fax/voice modems were widespread and the majority used either a Rockwell chipset or a chipset that matched Rockwell's control protocol.

Let's talk a bit about that protocol, and how voice modems actually worked. Although the V.250 standard for many AT commands was in place, there was no standard for voice control. To oversimplify a bit, the "core" AT commands are generally AT followed by one or two letters. "AT+" came to be used as a prefix for standardized "extension" commands, like those added for fax support. Manufacturer-specific extension commands used AT followed by some other character, and Rockwell chose AT#.

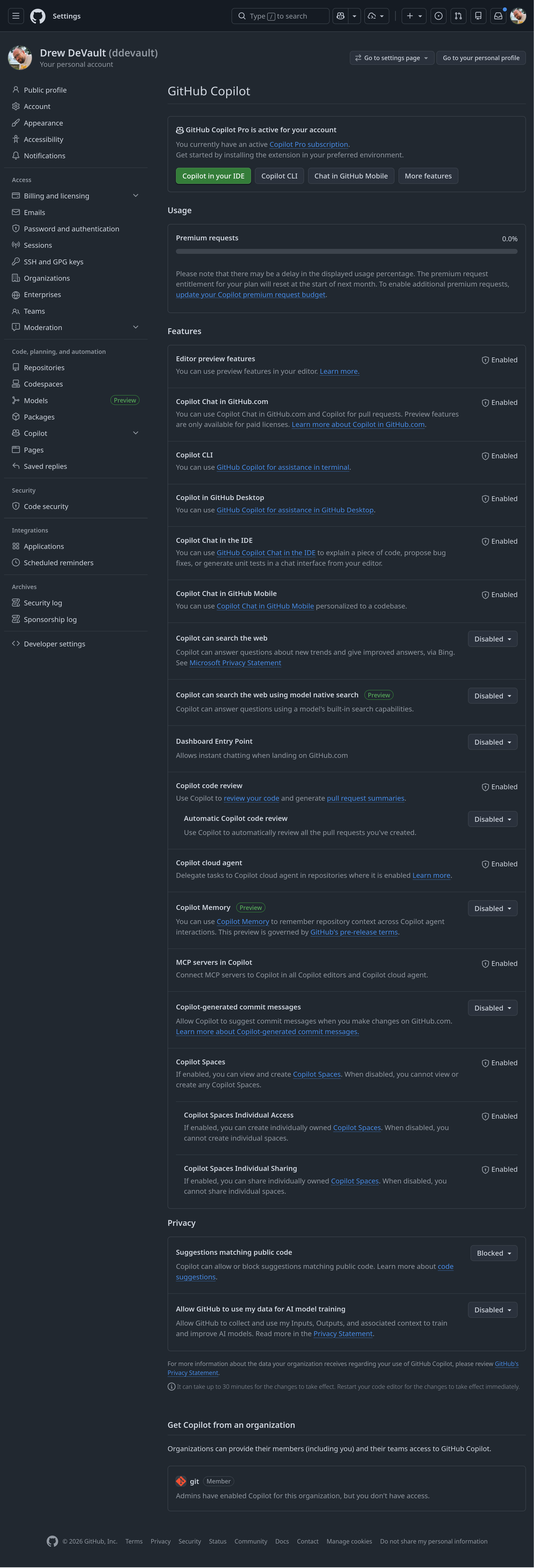

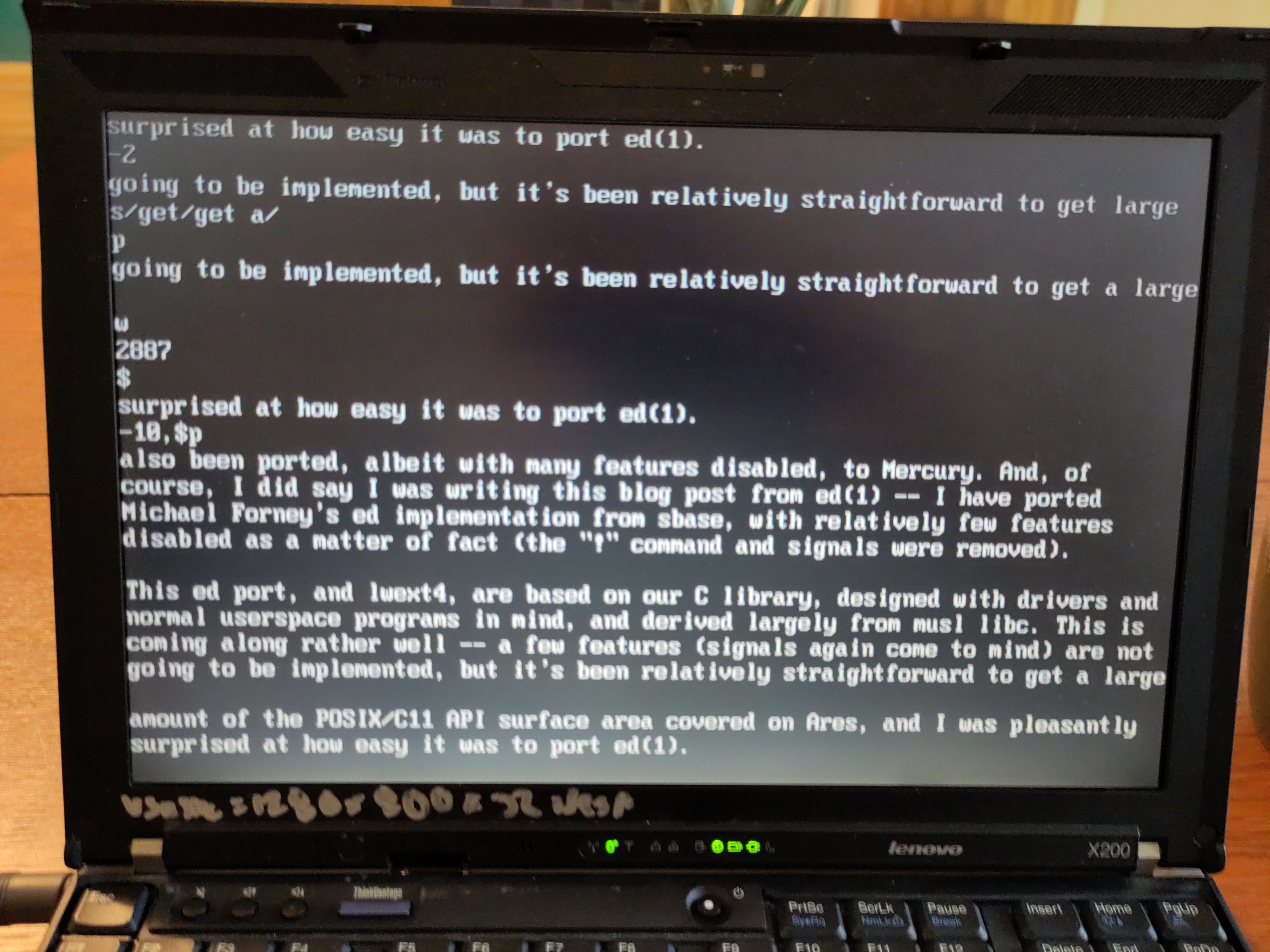

To start, voice mode was presented as just another fax class. Specifically, Rockwell voice mode is fax class 8, so to put a modem into voice mode you sent AT#CLS=8. (It seems like the properly standardized AT+FCLASS=8 also worked on many later chipsets, but I'm not sure about the early examples.) In most cases, you will also want to use the commands AT+VLS=?, which retrieves the modem's voice feature support, and AT+VSM=?, which returns the list of audio codecs supported by the modem.

Once in voice mode, you can use a set of voice-specific AT# commands to dial an outgoing call or answer an incoming one. The modem provides messages about call state, so once the call is up, you can issue the command AT#VTX to begin transmitting voice. This puts the modem into a mode much like a data or fax connection, in which everything sent over the serial connection is interpreted as audio data to be played onto the telephone line.

This gets into one of the ugly details of voice modems. Early voice modems had no audio connection to the computer, only serial, so audio data had to be sent over the serial connection. In keeping with telephony conventions, 8-bit PCM was widely supported, but practically required a fast 115200 baud serial connection. That's basically the limit for RS-232 and it was desirable to provide lower-bandwidth options, which mostly appeared as a proprietary form of ADPCM (adaptive differential PCM).
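The arithmetic behind that 115200 figure is easy to check. The sketch below assumes the telephony-standard 8 kHz sample rate and ordinary 8N1 serial framing (ten bits on the wire per byte of payload). The 4-bit ADPCM case is an illustrative assumption rather than a description of any particular chipset's codec, and the overhead of escape codes in the stream is ignored.

```python
# Common asynchronous serial rates, slowest first.
STANDARD_RATES = [9600, 19200, 38400, 57600, 115200]


def line_rate_needed(sample_rate_hz, bits_per_sample, bits_on_wire_per_byte=10):
    """Bits per second the serial link must carry for a raw audio stream.

    bits_on_wire_per_byte=10 assumes 8N1 framing: one start bit,
    eight data bits, one stop bit.
    """
    payload_bits_per_second = sample_rate_hz * bits_per_sample
    return payload_bits_per_second * bits_on_wire_per_byte // 8


def slowest_usable_rate(required_bps):
    """Return the first standard rate that can carry the stream, or None."""
    for rate in STANDARD_RATES:
        if rate >= required_bps:
            return rate
    return None


for label, bits in (("8-bit PCM", 8), ("4-bit ADPCM (assumed)", 4)):
    need = line_rate_needed(8000, bits)
    print(label, "needs", need, "bps; slowest usable rate:", slowest_usable_rate(need))
```

Under these assumptions 8-bit PCM needs 80,000 bits per second on the wire, which overshoots 57600 and forces the jump to 115200, while a 4-bit codec needs 40,000 and fits comfortably at 57600.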

During transmission of voice data, the modem would indicate events ranging from hang-up to received DTMF digits using escape codes prefixed with DLE (ASCII 0x10). Similarly, the computer could send the modem an escape code to indicate the end of voice data. Things worked much the same in reverse: AT#VRX put the modem in recording mode, and the modem sent audio data back to the computer over the same serial connection. The computer would use an escape code to stop recording, and could also receive a number of escape codes indicating various state changes from the modem during recording.
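As a rough illustration of how software might handle that in-band signaling, the Python sketch below separates a received byte stream into audio and events, and prepares outgoing audio for transmission. The specific codes are assumptions based on the common convention (a doubled DLE for a literal 0x10 byte in the audio, DLE followed by ETX for end of stream, DLE followed by a single character for an event such as a DTMF digit); as discussed later, chipsets disagreed on the details, so the reference manual for the actual modem is the real authority. The function names are invented for this example.

```python
DLE = 0x10  # Data Link Escape: introduces a two-byte escape code
ETX = 0x03  # after a DLE, marks the end of the audio stream (assumed convention)


def unshield(stream: bytes):
    """Split bytes from the modem into (audio, events, ended).

    events is a list of one-character codes that followed a DLE,
    for example '5' for a received DTMF digit 5.
    """
    audio = bytearray()
    events = []
    ended = False
    i = 0
    while i < len(stream):
        byte = stream[i]
        if byte != DLE:
            audio.append(byte)
            i += 1
            continue
        if i + 1 >= len(stream):
            break  # lone trailing DLE; real code would hold it for the next read
        code = stream[i + 1]
        i += 2
        if code == DLE:
            audio.append(DLE)  # escaped literal 0x10 inside the audio
        elif code == ETX:
            ended = True
            break
        else:
            events.append(chr(code))
    return bytes(audio), events, ended


def shield(audio: bytes) -> bytes:
    """Escape outgoing audio and append the end-of-stream code."""
    return audio.replace(b"\x10", b"\x10\x10") + b"\x10\x03"


# Round trip: audio containing a 0x10 byte survives shielding and unshielding.
print(unshield(shield(b"\x01\x10\x02")))
```

The escaping step is the easy part to forget: any audio sample that happens to equal 0x10 has to be doubled on the way out, or the modem will read the following sample as a command.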

The design of voice modems is pretty simple, just about the minimum viable product for sending and receiving voice data over a conventional telephone modem, and that's probably why it stayed. Voice support appeared in most Rockwell chipsets by 1995, and many other chipsets had a similar (but often not completely compatible) proprietary voice command set. Still, due to what I suspect may have been some ugly industry politics, it was not until 1998 that voice modem behavior was standardized by V.253. V.253 voice modem commands are mostly the same as the Rockwell proprietary scheme, but using the AT+ prefix. For example, AT+VRX and AT#VRX do the same thing in the V.253 and Rockwell command sets respectively, and in practice many post-V.253 modems seem to have just recognized both.

I suspect that Rockwell's interest in voice modems has some direct heritage to their contact center solutions, because the Rockwell voice command set provides more or less exactly what you need to build a computer-based interactive voice response (IVR) system... IVRs being a concept that Rockwell could fairly claim to have invented. And indeed, that was one of the main applications.

The voice modem almost immediately spawned a whole little industry of PC-based small office IVRs. As The Internet came into popularity, many businesses would already have a computer modem connected to their phone line for internet use, so why not let the computer answer the phone as well? Widespread addition of voice support to otherwise ordinary modems meant that some people already owned all the hardware necessary for an automatic attendant, and for those that didn't the cost of telephone modems (with voice support!) was crashing in the mid '90s.

The Internet Archive has preserved two such products from the early days of voice modems, Super Voice 2.0 from 1994 and Phone Secretary v1.01 of the same year. Phone Secretary (by Unique Software) has become quite obscure, and while there is still a company called Unique Software in the telephony market, I think they might be unrelated. Super Voice, on the other hand, made it to SuperVoice Pro 11 in 2016 and you can still buy a license today for just $99. Both of these products, and many more like them, had features like multi-mailbox voicemail and menu trees that used to require far more expensive equipment. Much of the original marketing for these products emphasizes the "Big Business" feeling of a phone tree, and Super Voice's description still says "Ideal for individuals, professionals and businesses that want to grow or appear bigger!"

Given how much people hate phone menus today, it's funny to think of them as a feature for your business's reputation, but small businesses are often trying to appear larger and having a menu of departments behind your single inbound phone line was a great way to do it. Besides, plain digital answering machines were very new in the '90s, so PC-based solutions promised more features at a similar price.

Voice modems got a big boost in 1996, when Microsoft introduced the Voice Modem Extensions for Windows 95. That package extended the Windows Telephony API (TAPI) with standardized support for voice modems. TAPI fully abstracted voice modems, which proved surprisingly complex and quite important. While most all voice modems used either the Rockwell commands or a very similar protocol, the actual details of audio handling were surprisingly varied. Poor handling of audio by the computers of the era meant that a lot of external voice modems had their own headset and microphone connections, and sometimes built-in speakerphone, for voice use.

External modems almost universally used a single RS-232 interface that switched in and out of audio data mode, as described previously, but by 1996, internal (ISA or PCI) modems were starting to use a separate UART channel for payload, including audio codec data 2. Even better, some manufacturers of internal modems were starting to integrate them with audio cards on a single expansion board. This allowed the modem to "cheat" off of the sound card's ADC and DAC components, and the OS's audio stack, reducing hardware cost. The downside was that TAPI had to support a bunch of different cases for how the control and audio channels related to each other, and whether the audio channel was "Rockwell style" or "Sound Blaster style." And note well that V.253 wasn't yet published, so while most voice modems were at least Rockwell-adjacent there were considerable inconsistencies from chipset to chipset when it came to the fine details of escape codes and behavior. A lot of software for voice modems from the era has noncommittal language about supporting "most" voice modems, and from 1996 forward that usually meant that it supported whatever voice modems Microsoft had special-cased in TAPI.

The latter type, of integrated modem/sound cards, is particularly important as the Creative Phone Blaster and Creative Modem Blaster were popular examples of consumer data/fax/voice modems. They were indeed Sound Blasters with modems added on, usually just stereo audio out, mono in, and a telephone jack. I am not clear on the difference between the Phone Blaster and Modem Blaster product lines, and I think those might just be older and newer names (respectively) for the same lineup. They are not special, lots of manufacturers had similar products, but they are good examples of just how strange consumer voice modems became.

The appeal of the voice modem to businesses is pretty obvious, since they were a far lower cost option for business phone systems. Unlike many telephony products, though, computer modems of the 1990s had little differentiation between consumer and business or "enterprise" products. Many large modem banks ran on the exact same modem chipsets as consumers used for dial-up connections, sometimes packaged in rack-mount modem banks but sometimes just as a whole lot of external modems taped to shelves. That meant that consumer modems tended to have the features of business systems, including the infrastructure for an IVR system.

The way that this manifested in commerce was... odd. Take the Creative Modem Blaster DI5660, described on the box as a "56kbps internal data fax modem with voice." While not exactly bargain basement, this also wasn't special, it was a pretty standard modem for the late '90s and received revisions until at least 2000. In the manual, after some mind-numbing complexity around integration with other sound cards 3, and the customary 20 pages of screenshots of the driver installer, the manual gives a feature matrix that promises:

Able to record and play voice messages over the telephone line.

Multiple mailboxes using included communications software.

High performance speakerphone.

So, the Creative Modem Blaster is not just a modem. It's not just a sound card. It's an answering machine. Wow! Nothing else is said about voice features. The install CD includes Cheyenne Bitware for sending and receiving faxes, but I'm not sure that this modem even had voicemail software on the CD. Earlier Creative modems do seem to have shipped with a software voicemail implementation, but only a very simple one integrated into the driver. The short AT command reference on the CD doesn't mention the voice-related commands at all, and at no point in the documentation is anything about IVRs or business applications mentioned.

This situation is really common with consumer modems. Find the manuals or driver CDs from 1990s modems (the Internet Archive has tons). Flip through them, and you will often find mention of multi-box voicemail as a feature and then absolutely nothing else about voice capability. "Answering machine" was the exclusive consumer application to such an extent that some of the manufacturers, including Creative, seem to have adopted the terms "voice mail modem" or "message modem" as replacements for "voice modem."

This narrowing of vision doesn't reflect any technical limitation; these modems all supported the full Rockwell (and later V.253) standards. You can find them on compatibility lists for business IVR packages, for example. Instead, it looks like voice mail was just the only consumer application anyone could come up with. The rest of the potential of the voice modem, for complete custom applications, was complicated and difficult to support, so it was easier to just not bring it up.

This is a general problem with more advanced features in modems. Telephone modems for internet access had all these downsides, like hogging a phone line that they arguably still did not fully utilize, that manufacturers kept trying to solve. Consumers, on the other hand, seem to have simply not cared. Computers of the 1990s were relatively big, loud, costly, and unstable, so people didn't tend to leave them running 24/7. That severely cut into the practicality of a modem-based voicemail package, especially for consumers, for whom the value of a more sophisticated inbound calling experience didn't justify much extra computer maintenance.

Other inroads towards unified telephone/modem features met similar adoption challenges. Consider "modem-on-hold," a common feature of late-'90s modems that allowed for answering incoming voice calls while the modem was in use. It seemed like it solved one of the #1 complaints about telephone modems, but in practice it was tricky enough to use that hardly anyone did. Efforts like ASVD and DSVD provided actual multiplexing of Simultaneous Voice and Data (SVD, in Analog and Digital forms), meaning you could talk on the phone and exchange data at the very same time, but hardly anyone did.

There are a few reasons these didn't succeed. One is compatibility; voice modems had a rough start with the long period between the Rockwell implementation and the actual V.253 standard, but at least most modems were fairly similar to Rockwell and the incompatibilities were isolated to the computer/modem interface where they were easier to handle. Protocols like SVD required support in the modems on both ends and were seldom compatible between vendors, which became an even bigger issue as independent BBSs gave way to ISP modem banks (which were fundamentally incompatible with the concepts behind SVD).

More time might have solved the compatibility problems, but consumer telephone modems were also proving to be a dead end. ISDN did not sweep away the modem by 1995 as the telcos had expected, but a few years later DSL did. DSL could do simultaneous voice and data much more seamlessly than even the most sophisticated modem SVD implementations, and with more bandwidth for both channels to boot.

To the modern computer user, the idea that a common telephone modem would casually incorporate a voicemail system is silly. Despite the obvious technical overlap, those are very different domains of consumer products. The window in which it made sense to combine them was fairly short, and the practical realities of the Windows 95 and 98 era severely limited the promised convenience. At least in the consumer realms, voice modems went pretty much nowhere.

Still, it is a technology with remarkable staying power. For example, you might assume that the late-1990s reshaping of the modem industry around thin "winmodems" (that eliminated most hardware in favor of software on the host CPU) wiped out voice support, but it didn't. By moving most of the logic to host software, winmodems actually tended to make voice features simpler to implement. V.253 voice support was quite common on winmodems.

In fact, it's still common on modems today. Consider the StarTech USB 2.0 v.92 modem, a very common choice for anyone in need of a telephone modem today. StarTech titles it as "Computer/Laptop Fax Modem" and "USB Data Modem," never quite invoking the traditional Data/Fax/Voice, but if you look at the feature list you'll find: "VOICE AND CALLER ID SUPPORT: Make and receive phone calls through your desktop / laptop computer." Despite the callout in the feature list, the spec sheet makes no mention of any voice command set. It does tell us that the modem is built around a Conexant CX93010-21Z (recall that Conexant is the descendant of Rockwell), and the Conexant reference manual for that chipset describes a voice command set that is "based on V.253."

This gets at one of the other fatal flaws of the voice modem. It's peculiar that a modem manual would specifically claim voice support and then list all of the supported data and fax modes, but not list any voice modes at all. Every single modem I've found after the mid-1990s is like this: they tell you that they have voice support, but absolutely nothing about it. You walk away with no idea how you would use a voice modem, or what a "voice modem" even is, outside of the one feature that the manufacturer may have deemed fit to ship on the CD, which is invariably voicemail.

I think this is the manifestation of an underlying problem: the compatibility and standardization problems with voice modems go beyond the fact that it took six years for Rockwell's command set to turn into an ITU standard. It doesn't appear that voice modems were ever that well standardized. Despite the wide range of voice-compatible modems on the '90s market, and the wide range of software for using them, most all of those software packages come with a manual full of warnings, caveats, limitations, and workarounds. Despite V.253, different manufacturers had different opinions about the meanings of escape codes. Several common chipsets had outright bugs, which applications had to work around. As we found today, it was often the case that understanding voice modem compatibility required identifying the underlying chipset and getting the manufacturer's command set reference manual.

Modem manufacturers, the end-user brands, seldom made their own chipsets. They were at the mercy of companies like Conexant, who seemed uninterested in further evolution of voice features. No wonder that they found it easier to just not talk about it.

For how little it ultimately mattered, voice modems got a surprising amount of technical investment from the software world. I suppose that in a world before widespread DSL and VoIP, they seemed like a solution to two problems: the disconnect between computer and telephone operation on the phone line, and the desire to use computers to automate telephone call handling. In 1997, reviewer Mark Spiwak wrote for Windows Magazine:

Thanks to the Hayes Accura 288 Internal Fax Modem with Voice, small offices seem large to outside callers. This single expansion card from a trusted modem manufacturer equips a PC with a fast modem and fax capabilities, and provides speakerphone and voice-mail functions. The modem distinguishes among incoming voice, data and fax calls, and routes them accordingly. With the modem's speaker, you don't need a sound card (although it will work with one, and a cable is included). There's also a microphone in the box.

There are ups and downs in this review. First, consider the degree of complexity hidden in the modem "distinguishing" voice, data, and fax calls. That's a longstanding hard problem in telephony that engendered a lot of hacky solutions. I think that what Hayes is referring to here is actually more of a Windows/TAPI feature than a modem feature; as part of TAPI Microsoft shipped something called the Microsoft Operator Agent that would answer phone calls on a voice-capable modem, detect modem tones or prompt the user to select a call mode as an IVR menu, and then invoke the specified application to handle the rest of the call. There was a whole generalized, registry-based routing mechanism to allow multiple applications to accept phone calls based on caller intent. It feels like a rather sophisticated piece of operating system functionality to have almost completely withered away.

On the other hand, there's "a microphone in the box" and the promise that the modem will work with a sound card even if one isn't required. These are hints at the kind of complexity that a typical consumer would have found fairly impenetrable, the ugly tradeoffs involved in running a real-time media application on a Pentium system that would use AC'97 audio if you were lucky.

During the early '00s, Microsoft invested in fax as an OS feature in Windows, although I don't think it ever received that much use. It was already too late for voice modems, though, and Windows support for voice modems (and TAPI) generally declined after XP. On the Windows Server side, NT4's TAPI could provide a fairly complete IVR system with Windows alone, but the potential of COM-based TAPI business phone systems got wrapped up in Microsoft's Unified Communications craze and then died along with it.

Voice modems surely lasted longer in business applications, particularly since a number of business products like PABXs actually implemented V.253 as a bridge for TAPI applications. Quite a few voice-modem-based IVR products are still for sale, suggesting that they have enduring users. Of course, the more actively developed of them now all offer VoIP capabilities as well. Practically speaking, SIP has completely replaced the role of the voice modem, and it says something about voice modems that even with its many idiosyncrasies SIP is the simpler and better supported path.

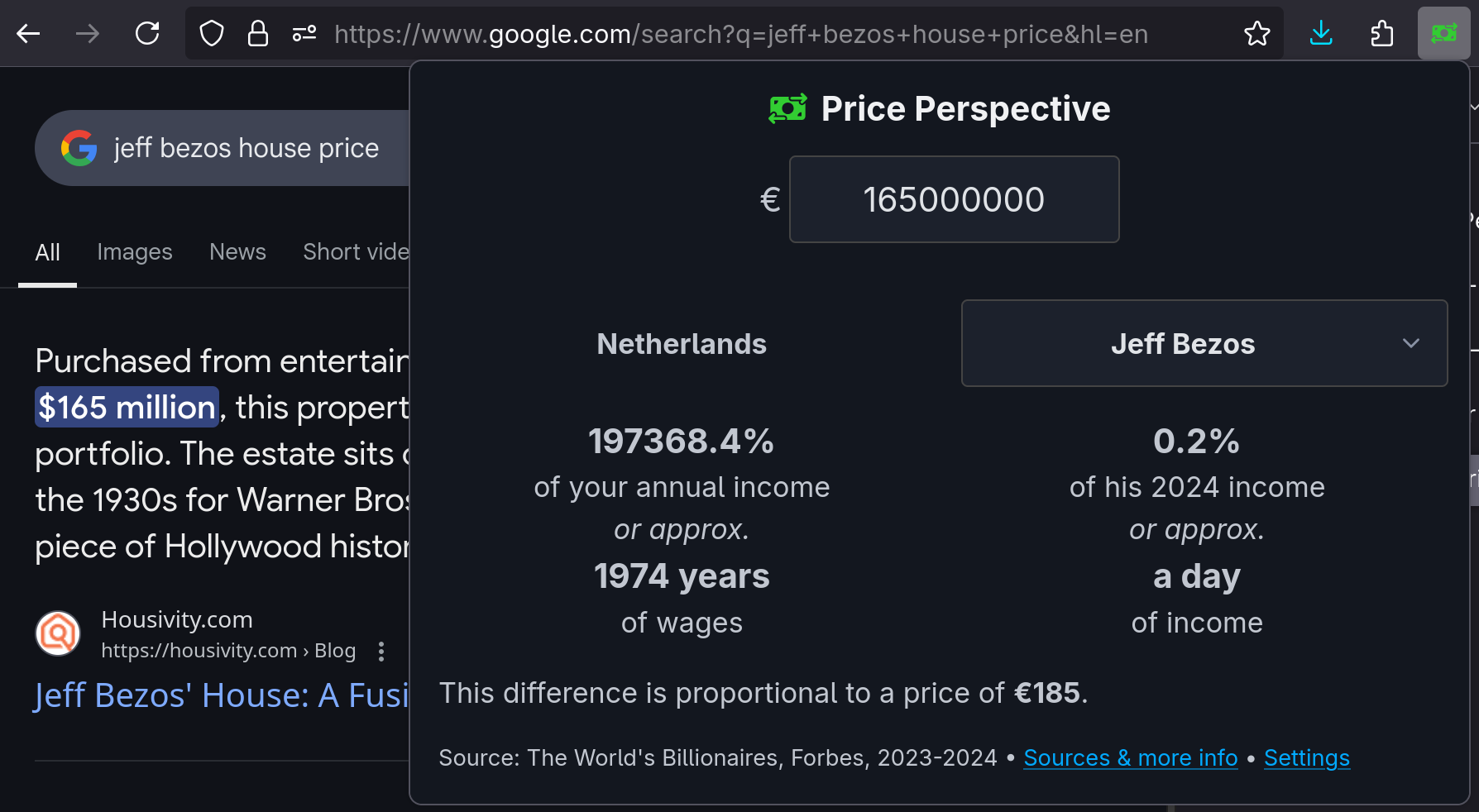

And now, we come back to our modern era. In the course of researching voice modems, I ran into a 2017 blog post, "The sad state of voice support in cellular modems." The author, Harald Welte, laments that USB-attached modems do not present a USB audio device, but "instead, what some modem vendors have implemented as an ugly hack is the transport of 8kHz 16bit PCM samples over one of the UARTs."

In the full light of history, we realize that what Welte describes as an "ugly hack" is, in fact, exactly the way it has always worked. In 1981, Hayes introduced the Smartmodem, a standalone device that abstracted every part of telephony into a serial channel and a simple command format. In 1992, Rockwell made the modem speak, by putting PCM audio over that same serial channel. In 2017, very little had changed—Welte's post has aged poorly only in that many modern phones have the modem more fully integrated into the SOC and use a completely proprietary interface instead of the traditional serial channel.

Of course, that's for smartphone manufacturers, who have the extensive engineering departments and the close relationships with their chipmakers to implement such proprietary interfaces. For the rest of us, with our USB modems (now universally USB, even when packaged in M.2 form), the modem is still a black box with a serial port. The computer does not participate in a phone call; that's the modem's job. If you want to say something, you better PCM encode it and send it to the TTY.

-

It is very common to refer to this component of a smartphone, the actual RF and cellular implementation, as the "baseband." Baseband is an overloaded term in telephony and the connection between the word's original meaning and its use to refer to a smartphone component is so indirect as to verge on rhyming slang, so I will simply call it the "cellular modem" for clarity.↩

-

Mixing arbitrary communications data (the bearer channel) with control commands on a single serial channel created a heap of problems around escaping and mode switching that were never fully resolved. Consider, for example, that Rockwell modems used ASCII DLE as an escape character despite the fact that the 0x10 byte could, and would, appear in PCM audio data. That meant that any instance of 0x10 in the audio data had to be replaced with the two-byte sequence 0x10 0x10 (DLE escaping itself to produce a literal DLE), at the cost of some bandwidth overhead and implementation complexity. And that's simple and elegant compared to how the same problem was handled for data mode, with the infamous "+++" sequence. The result was that modem manufacturers started separating the bearer and control channels into two different UARTs as soon as it became feasible. I will save more depth on this for a future post, but modern LTE modems, depending on configuration (and this is indeed configurable), may present as many as 7 separate UARTs for different purposes. You only need one of them, but using separate UARTs instead of switching modes on the primary one saves a whole lot of headache.↩
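To make the DLE escaping in the footnote above concrete, here is a minimal Python sketch of the byte stuffing. The function names are my own, and treating every non-DLE byte that follows a DLE as a one-byte "event code" is a simplifying assumption for illustration, not a description of any particular modem's firmware. The only rule taken from the text is that a literal 0x10 in the audio travels as two 0x10 bytes.

```python
DLE = 0x10  # ASCII Data Link Escape, used as the in-band escape byte


def dle_stuff(pcm: bytes) -> bytes:
    """Double every 0x10 in the audio so the far side reads it as a literal DLE."""
    return pcm.replace(b"\x10", b"\x10\x10")


def dle_unstuff(stream: bytes) -> tuple[bytes, list[int]]:
    """Split a stuffed stream into audio bytes and escaped event codes.

    Assumption (hypothetical framing): DLE followed by DLE is one literal
    audio byte; DLE followed by anything else is a one-byte event code.
    """
    audio = bytearray()
    events: list[int] = []
    i = 0
    while i < len(stream):
        byte = stream[i]
        if byte != DLE:
            audio.append(byte)
            i += 1
            continue
        if i + 1 >= len(stream):
            # Dangling DLE at the end of a read; a real reader would hold it
            # until the next chunk arrives. This sketch just stops.
            break
        following = stream[i + 1]
        if following == DLE:
            audio.append(DLE)          # escaped literal 0x10
        else:
            events.append(following)   # in-band control code
        i += 2
    return bytes(audio), events


samples = bytes([0x00, 0x10, 0x7F, 0x10, 0x10])
stuffed = dle_stuff(samples)
# 0x03 (ASCII ETX) stands in here for an arbitrary in-band event code.
audio, events = dle_unstuff(stuffed + bytes([DLE, 0x03]))
```

Three of the five sample bytes happen to be 0x10, so the stuffed stream grows from five bytes to eight. That is the bandwidth overhead in miniature, and the bookkeeping in `dle_unstuff` is the implementation complexity.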

-

Some sound cards in this era had a connector for a jumper wire to a telephone modem, so that the sound card could exchange audio with the modem. That allowed you to set up the same logical architecture as integrated modem/sound cards, but with two different cards. It's amusing that Creative provided such a connector with an integrated modem/sound card, but they explain that you might want to use it so that you can use your computer as a speakerphone without having to have a second set of speakers plugged into the Modem Blaster. Yes, in practice, things got pretty ugly. You could come up with an elegant setup but it would take some doing.↩