cash issuing terminals

In the United States, we are losing our fondness for cash. As in many other countries, cards and other types of electronic payments now dominate everyday commerce. To some, this is a loss. Cash represented a certain freedom from intermediation, a comforting simplicity that you just don't get from Visa. It's funny to consider, then, how cash is in fact quite amenable to automation. Even Benjamin Franklin's face on a piece of paper can feel like a mere proxy for a database transaction. How different is cash itself from "e-cash", when it starts and ends its lifecycle through automation?

Increasing automation of cash reflects the changing nature of banking: decades ago, a consumer might have interacted with banking primarily through a "passbook" savings account, where transactions were so infrequent that the bank recorded them directly in the patron's copy of the passbook. Over the years, increasing travel and nationwide communications led to the ubiquitous use of inter-bank money transfers, mostly in the form of the check. The accounts that checks typically drew on—checking accounts—were made for convenience and ease of access. You might deposit your entire paycheck into an account—it might even be sent there automatically—and then when you needed a little walking around money, you would withdraw cash with the assistance of a teller. By the time I was a banked consumer, even the teller was mostly gone. Today, we get our cash from machines so that it can be deposited into other machines.

Cash handling is fraught with peril. Bills are fairly small and easy to hide, and yet quite valuable. Automation in the banking world first focused on solving this problem, of reliable and secure cash handling within the bank branch. The primary measure against theft by insiders was that the theft would be discovered, as a result of the careful bookkeeping that typifies banks. But, well, that bookkeeping was surprisingly labor-intensive in even the bank of the 1950s.

Histories of the ATM usually focus on just that: the ATM. It's an interesting story, but one that I haven't been particularly inclined to cover due to the lack of a compelling angle. Let's try IBM. IBM is such an important, famous player in business automation that it forms something of a synecdoche for the larger industry. Even so, in the world of bank cash handling, IBM's efforts ultimately failed... a surprising outcome, given their dominance in the machines that actually did the accounting.

In this article, we'll examine the history of ATMs—by IBM. IBM was just one of the players in the ATM industry and, by its maturity, not even one of the more important ones. But the company has a legacy of banking products that put the ATM in a more interesting context, and despite lackluster adoption of later IBM models, their efforts were still influential enough that later ATMs inherited some of IBM's signature design concepts. I mean that more literally than you might think. But first, we have to understand where ATMs came from. We'll start with branch banking.

When you open a bank account, you typically do so at a "branch," one of many physical locations that a national bank maintains. Let us imagine that you are opening an account at your local branch of a major bank sometime around 1930; whether before or after that year's bank run is up to you. Regardless of the turbulent economic times, the branch becomes responsible for tracking the balance of your account. When you deposit money, a teller writes up a slip. When you come back and withdraw money, a different teller writes up a different slip. At the end of each business day, all of these slips (which basically constitute a journal in accounting terminology) have to be rounded up by the back office and posted to the ledger for your account, which is naturally kept as a card in a big binder.
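To make that end-of-day routine concrete, here is a minimal sketch of posting a day's journal of slips to per-account ledger balances. The `Slip` type, the account numbers, and the amounts are all invented for illustration; the real back office did this by hand, on paper cards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slip:
    """One teller slip (hypothetical structure, for illustration only)."""
    account: str
    amount_cents: int  # positive = deposit, negative = withdrawal


def post_journal(ledger, journal):
    """Return a new ledger with every slip in the day's journal posted."""
    new_ledger = dict(ledger)
    for slip in journal:
        new_ledger[slip.account] = new_ledger.get(slip.account, 0) + slip.amount_cents
    return new_ledger


# Opening balances, in cents, for two made-up accounts.
opening = {"1001": 5000, "1002": 1250}
journal = [Slip("1001", -2000), Slip("1002", 500), Slip("1001", 325)]
closing = post_journal(opening, journal)
```

Nothing here is clever, which is rather the point: the work is pure repetition, and that is exactly what made it such an obvious target for machines.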

A perfectly practicable 1930s technology, but you can already see the downsides. Imagine that you appear at a different branch to withdraw money from your account. Fortunately, this was not very common at the time, and you would be more likely to use other means of moving money in most scenarios. Still, the bank tries to accommodate. The branch at which you have appeared can dispense cash, write a slip, and then send it to the correct branch for posting... but they also need to post it to their own ledger that tracks transactions for foreign accounts, since they need to be able to reconcile where their cash went. And that ignores the whole issue of who you are, whether or not you even have an account at another branch, and whether or not you have enough money to cover the withdrawal. Those are problems that, mercifully, could mostly be sorted out with a phone call to your home branch.

Bank branches, being branches, do not exist in isolation. The bank also has a headquarters, which tracks the finances of its various branches—both to know the bank's overall financial posture (critical considering how banks fail), and to provide controls against insider theft. Yes, that means that each of the branch banks had to produce various reports and ledger copies and then send them by courier to the bank headquarters, where an army of clerks in yet another back office did yet another round of arithmetic to produce the bank's overall ledgers.

As the United States entered World War II, an expanding economy, rapid industrial buildup, and a huge increase in national mobility (brought on by things like the railroads and highways) caused all of these tasks to occur on larger and larger scales. Major banks expanded into a tiered system, in which branches reported their transactions to "regional centers" for reconciliation and further reporting up to headquarters. The largest banks turned to unit record equipment or "business machines," arguably the first form of business computing: punched card machines that did not evaluate programs, but sorted and summed.

Simple punched card equipment gave way to more advanced automation, innovations like the "posting machine." These did exactly what they promised: given a stack of punched cards encoding transactions, they produced a ledger with accurately computed sums. Specialized posting machines were made for industries ranging from hospitality (posting room service and dining charges to room folios) to every part of finance, and might be built custom to the business process of a large customer.

If tellers punched transactions into cards, the bank could come much closer to automation by shipping the cards around for processing at each office. But then, if transactions are logged in a machine readable format, and then processed by machines, do we really need to courier them to rooms full of clerks?

Well, yes, because that was the state of technology in the 1930s. But it would not stay that way for long.

In 1950, Bank of America approached SRI about the feasibility of an automated check processing system. Use of checks was rapidly increasing, as were total account holders, and the resulting increase in inter-branch transactions was clearly overextending BoA's workforce—to such an extent that some branches were curtailing their business hours to make more time for daily closing. By 1950, computer technology had advanced to such a state that it was obviously possible to automate this activity, but it still represented one of the most ambitious efforts in business computing to date.

BoA wanted a system that would not only automate the posting of transactions prepared by tellers, but actually automate the handling of the checks themselves. SRI and, later, their chosen manufacturing partner General Electric ran a multi-year R&D campaign on automated check handling that ultimately led to the design of the checks that we use today: preprinted slips with account holder information, and account number, already in place. And, most importantly, certain key fields (like account number and check number) represented in a newly developed machine-readable format called "MICR" for magnetic ink character recognition. This format remains in use today, to the extent that checks remain in use, although as a practical matter MICR has given way to the more familiar OCR (aided greatly by the constrained and standardized MICR character set).

The machine that came out of this initiative was called ERMA, the Electronic Recording Machine, Accounting. I will no doubt one day devote a full article to ERMA, as it holds a key position in the history of business computing while also managing to not have much of a progeny due to General Electric's failure to become a serious contender in the computer industry. ERMA did not lead to a whole line of large-scale "ERM" business systems as GE had hoped, but it did firmly establish the role of the computer in accounting, automate parts of the bookkeeping through almost the entirety of what would become the nation's largest bank, and inspire generations of products from other computer manufacturers.

The first ERMA system went into use in 1959. While IBM was the leader in unit record equipment and very familiar to the banking industry, it took a few years for Big Blue to bring their own version. Still, IBM had their own legacy to build on, including complex electromechanical machines that performed some of the tasks that ERMA was taking over. Since the 1930s, IBM had produced a line of check processing or "proofing" machines. These didn't exactly "automate" check handling, but they did allow a single operator to handle a lot of documents.

The IBM 801, 802, and 803 line of check proofers used what were fundamentally unit record techniques—keypunch, sorting bins, mechanical totalizers—to present checks one at a time in front of the operator, who read information like the amount, account number, and check number off of the paper slip and entered it on a keypad. The machine then whisked the check away, printed the keyed data (and reference numbers for auditing) on the back of the check, stamped an endorsement, added the check's amounts to the branch's daily totals (including subtotals by document type), and deposited the check in an appropriate sorter bin to be couriered to the drawer's bank. While all this happened, the machines also printed the keyed check information and totals onto paper tapes.

By the early 1960s, with ERMA on the scene, IBM started to catch up. Subsequent check processing systems gained support for MICR, eliminating much (sometimes all!) of the operator's keying. Since the check proofing machines could also handle deposit slips, a branch that generated MICR-marked deposit slips could eliminate most of the human touchpoints involved in routine banking. A typical branch bank setup might involve an IBM 1210 document reader/sorter machine connected by serial channel to an IBM 1401 computer. This system behaved much like the older check proofers, reading documents, logging them, and calculating totals. But it was now all under computer control, with the flexibility and complexity that entails.

One of these setups could process almost a thousand checks a minute with a little help from an operator, and adoption of electronic technology at other stages made clerks' lives easier. For example, IBM's mid-1960s equipment introduced solid-state memory. The IBM 1260 was used for adding machine-readable MICR data to documents that didn't already have it. Through an innovation that we would now call a trivial buffer, the 1260's operator could key in the numbers from the next document while the printer was still working on the previous.

Along with improvements in branch bank equipment came a new line of "high-speed" systems. In a previous career, I worked at a Federal Reserve bank, where "high-speed" was used as the name of a department in the basement vault. There, huge machines processed currency to pick out bad bills. This use of "high-speed" seems to date to an IBM collaboration with the Federal Reserve to build machines for central clearinghouses, handling checks by the tens of thousands. By the time I found myself in central banking, the use of "high-speed" machinery for checks was a thing of the past—"digital substitute" documents or image-based clearing having completely replaced physical handling of paper checks. Still, the "high-speed" staff labored on in their ballistic glass cages, tending to the green paper slips that the institution still dispenses by the millions.

One of the interesting things about the ATM is when, exactly, it pops up in the history of computers. We are, right now, in the 1960s. The credit card is in its nascent stages; MasterCard's predecessor pops up in 1966 to compete with Bank of America's own partially ERMA-powered charge card offering. With computer systems maintaining account sums, and document processing machines communicating with bookkeeping computers in real-time, it would seem that we are on the very cusp of online transaction authorization, which must be the fundamental key to the ATM. ATMs hand out cash, and one thing we all know about cash is that, once you give yours to someone else, you are very unlikely to get it back. ATMs, therefore, must not dispense cash unless they can confirm that the account holder is "good for it." Otherwise the obvious fraud opportunity would easily wipe out the benefits.

So, what do you do? It seems obvious, right? You connect the ATM to the bookkeeping computer so it can check account balances before dispensing cash. Simple enough.

But that's not actually how the ATM evolved, not at all. There are plenty of reasons. Computers were very expensive so banks centralized functions and not all branches had one. Long-distance computer communication links were very expensive as well, and still, in general, an unproven technology. Besides, the computer systems used by banks were fundamentally batch-mode machines, and it was difficult to see how you would shove an ATM's random interruptions into the programming model.

Instead, the first ATMs were token-based. Much like an NYC commuter of the era could convert cash into a subway token, the first ATMs were machines that converted tokens into cash. You had to have a token—and to get one, you appeared at a teller during business hours, who essentially dispensed the token as if it were a routine cash withdrawal.

It seems a little wacky to modern sensibilities, but keep in mind that this was the era of the traveler's check. A lot of consumers didn't want to carry a lot of cash around with them, but they did want to be able to get cash after hours. By seeing a teller to get a few ATM tokens (usually worth $10 or £10 and sometimes available only in that denomination), you had the ability to retrieve cash, but only carried a bank document that was thought (due to features like revocability and the presence of ATMs under bank surveillance) to be relatively secure against theft. Since the tokens were later "cleared" against accounts much like checks, losing them wasn't necessarily a big deal, as something analogous to a "stop payment" was usually possible.

Unlike subway tokens, these were not coin-shaped. The most common scheme was a paper card, often the same dimensions as a modern credit card, but with punched holes that encoded the denomination and account holder information. The punched holes were also viewed as an anti-counterfeiting measure, probably not one that would hold up today, but still a roadblock to fraudsters who would have a hard time locating a keypunch and a valid account number. Manufacturers also explored some other intriguing opportunities, like the very first production cash dispenser, 1967's Barclaycash machine. This proto-ATM used punched paper tokens that were also printed in part with a Carbon-14 ink. Carbon-14 is unstable and emits beta radiation, which the ATM detected with a simple electrostatic sensor. For some reason difficult to divine, the radioactive ATM card did not catch on.

For roughly the first decade of the "cash machine," they were offline devices that issued cash based on validating a token. The actual decision making, on the worthiness of a bank customer to withdraw cash, was still deferred to the teller who issued the tokens. Whether or not you would even consider this an ATM is debatable, although historical accounts generally do. They are certainly of a different breed than the modern online ATM, but they also set some of the patterns we still follow. Consider, for example, the ATMs within my lifespan that accepted deposits in an envelope. These ATMs did nothing with the envelopes other than accumulate them into a bin to go to a central processing center later on—the same way that early token-based ATMs introduced deposit boxes.

In this theory of ATM evolution, the missing link that made 1960s–1970s ATMs so primitive was the lack of computer systems that were amenable to real-time data processing using networked peripherals. The '60s and '70s were a remarkable era in computer history, though, seeing the introduction of IBM's System/360 and System/370 line. These machines were more powerful, more flexible, and more interoperable than any before them. I think it's fair to say that, despite earlier dabbling, it was the 360/370 that truly ushered in the era of business computing. Banks didn't miss out.

One of the innovations of the System/360 was an improved and standardized architecture for the connection of peripherals to the machine. While earlier IBM models had supported all kinds of external devices, there was a lot of custom integration to make that happen. With the System/360, this took the form of "Bisync," which I might grandly call a far ancestor of USB. Bisync allowed a 360 computer to communicate with multiple peripherals connected to a common multi-drop bus, even using different logical communications protocols. While the first Bisync peripherals were "remote job entry" terminals for interacting with the machine via punched cards and teletype, IBM and other manufacturers found more and more applications in the following years.

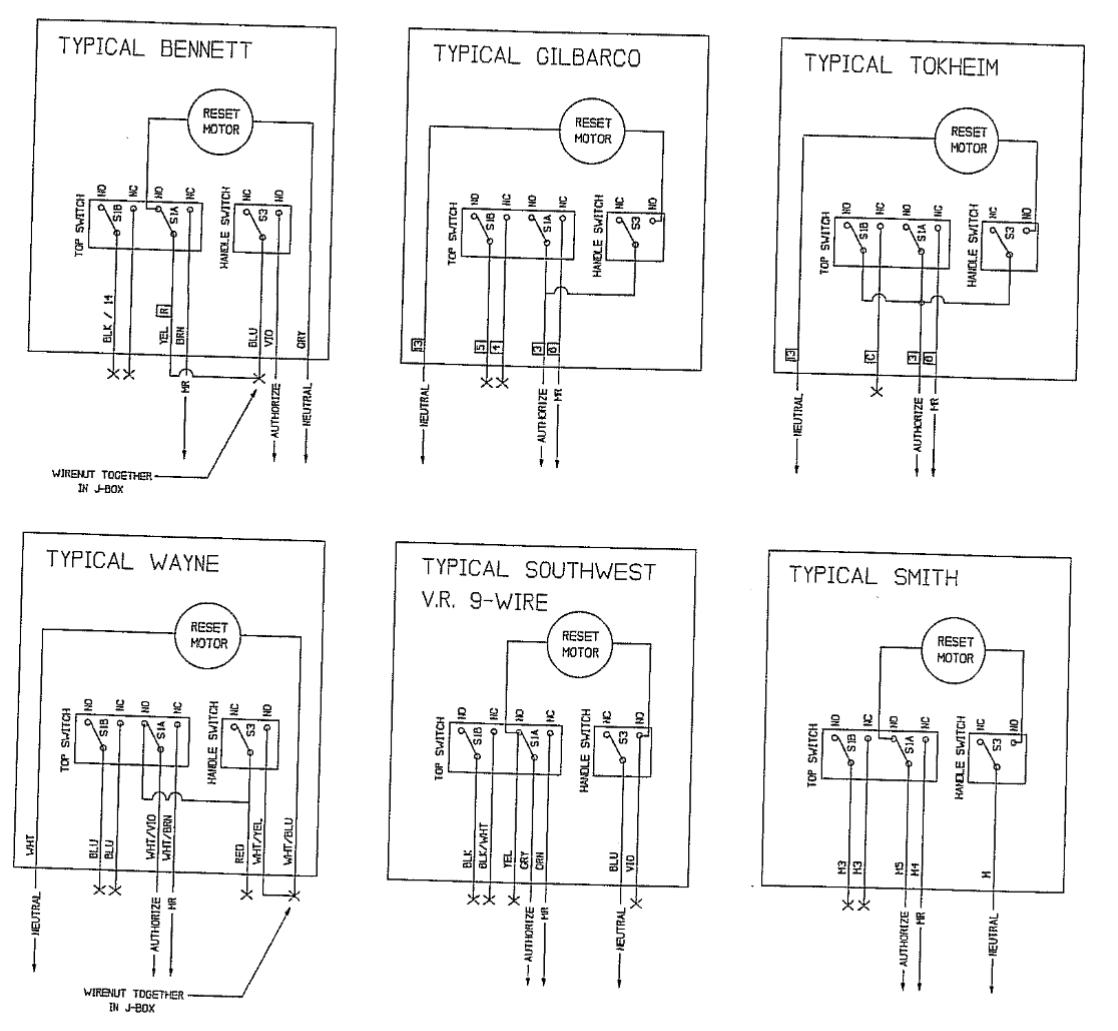

IBM had already built document processing machines that interacted with their computers. In 1971, IBM joined the credit card fray with the 2730, a "transaction" terminal that we would now recognize as a credit card reader. It used a Bisync connection to a System/360-class machine to authorize a credit transaction in real time. The very next year, IBM took the logical next step: the IBM 2984 Cash Issuing Terminal. Like many other early ATMs, the 2984 had its debut in the UK as Lloyds Bank's "Cashpoint."

The 2984 similarly used Bisync communications with a System/360. While not the very first implementation of the concept, the 2984 was an important step in ATM security and the progenitor of an important line of cryptographic algorithms. To withdraw cash, a user inserted a magnetic card that contained an account number, and then keyed in a PIN. The 2984 sent this information, over the Bisync connection, to the computer, which then responded with a command such as "dispense cash." In some cases, the computer was immediately on the other side of the wall, but it was already apparent that banks would install ATMs in remote locations controlled via leased telephone lines—and those telephone lines were not well-secured. A motivated attacker (and with cash involved, it's easy to be motivated!) could probably "tap" the ATM's network connection and issue it spurious "dispense cash" commands. To prevent this problem, and assuage the concerns of bankers who were nervous about dispensing cash so far from the branch's many controls, IBM decided to encrypt the network connection.

The concept of an encrypted network connection was not at all new; encrypted communications were widely used in the military during the Second World War and the concept was well-known in the computer industry. As IBM designed the 2984, in the late '60s, encrypted computer links were nonetheless very rare. There were not yet generally accepted standards, and cryptography as an academic discipline was immature.

IBM, to secure the 2984's network connection, turned to an algorithm recently developed by an IBM researcher named Horst Feistel. Feistel, for silly reasons, had named his family of experimental block ciphers LUCIFER. For the 2984, IBM used a modified version of one of the LUCIFER implementations called DSD-1. Through a Bureau of Standards design competition and the twists and turns of industry politics, DSD-1 later reemerged (with just slight changes) as the Data Encryption Standard, or DES. We owe the humble ATM honors for its key role in computer cryptography.
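The structural trick that carries Feistel's name is worth seeing in code: the block is split in half, and each round mixes one half into the other, so decryption is the same routine run with the round keys reversed, even when the round function itself cannot be inverted. What follows is a toy sketch under loud assumptions. The round function, the 32-bit halves, and the round keys are all invented for illustration; this is not DSD-1 or DES, and it should never be used to protect anything.

```python
MASK32 = 0xFFFFFFFF


def round_function(half, key):
    """Toy mixing function (an assumption, not the real DES round function).

    Squaring mod 2**32 is lossy (x and -x collide), so this is NOT invertible.
    The Feistel structure does not need it to be.
    """
    x = (half ^ key) & MASK32
    return (x * x + 0x9E3779B9) & MASK32


def feistel(block, round_keys):
    """Run a 64-bit block through a Feistel network using the given keys."""
    left, right = (block >> 32) & MASK32, block & MASK32
    for key in round_keys:
        left, right = right, left ^ round_function(right, key)
    # Swap the halves on the way out so the routine undoes itself
    # when called again with the round keys in reverse order.
    return (right << 32) | left


def encrypt(block, round_keys):
    return feistel(block, round_keys)


def decrypt(block, round_keys):
    return feistel(block, list(reversed(round_keys)))


# Hypothetical round keys and plaintext block.
keys = [0x1234, 0xBEEF, 0xCAFE, 0xF00D]
plaintext = 0x0123456789ABCDEF
ciphertext = encrypt(plaintext, keys)
recovered = decrypt(ciphertext, keys)
```

The appeal for hardware of the era is that one circuit serves for both directions; only the key schedule changes.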

The 2984 was a huge step forward. Unlike the token-based machines of the 1960s, it was pretty much the same as the ATMs we use today. To use a 2984, you inserted your ATM card and entered a PIN. You could then choose to check your balance, and then enter how much cash you wanted. The machine checked your balance in real time and, if it was high enough, debited your account immediately before coughing up money.

The 2984 was not as successful as you might expect. The Lloyds Bank rollout was big, but very few were installed by other banks. Collective memory of the 2984 is vague enough that I cannot give a definitive reason for its limited success, but I think it likely comes down to a common tale about IBM: price and flexibility. The 2984 was essentially a semi-custom peripheral, designed for Lloyds Bank and the specific System/360 environment already in place there. Adoption for other banks was quite costly. Besides, despite the ATM's lead in the UK, the US industry had quickly caught up. By the time the 2984 would be considered by other banks, there were several different ATMs available in the US from other manufacturers (some of them the same names you see on ATMs today). The 2984 is probably the first "modern" ATM, but since IBM spent 4-5 years developing it, it was not as far ahead of the curve on launch day as you might expect. Just a year or two later, a now-forgotten company called Docutel was dominating the US market, leaving IBM little room to fit in.

Because most other ATMs were offered by companies that didn't control the entire software stack, they were more flexible, designed to work with simpler host support. There is something of an inverse vertical integration penalty here: when introducing a new product, close integration with an existing product family makes it difficult to sell! Still, it's interesting that the 2984 used pretty much the same basic architecture as the many ATMs that followed. It's worth reflecting on the 2984's relationship with its host, a close dependency that generally holds true for modern ATMs as well.

The 2984 connected to its host via a Bisync channel (possibly over various carrier or modem systems to accommodate remote ATMs), a communications facility originally provided for remote job entry, the conceptual ancestor of IBM's later block-oriented terminals. That means that the host computer expected the peripheral to provide some input for a job and then wait to be sent the results. Remote job entry devices, and block terminals later, can be confusing when compared to more familiar Unix-family terminals. In some ways, they were quite sophisticated, with the host computer able to send configuration information like validation rules for input. In other ways, they were very primitive, capable of no real logic other than receiving computer output (which was dumped to cards, TTY, or screen) and then sending computer input (from much the same devices). So, the ATM behaved the same way.

In simple terms, the ATM's small display (called a VDU or Video Display Unit in typical IBM terminology) showed whatever the computer sent as the body of a "display" command. It dispensed whatever cash the computer indicated with a "dispense cash" command. Any user input, such as reading a card or entry of a PIN number, was sent directly to the computer. The host was responsible for all of the actual logic, and the ATM was a dumb terminal, just doing exactly what the computer said. You can think of the Cash Issuing Terminal as, well, just that: a mainframe terminal with a weird physical interface.
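Here is a rough sketch of that division of labor. The `DISPLAY` and `DISPENSE` command names, the scripted input, and the `host_session` logic are all hypothetical stand-ins of my own; the real 2984 message formats are not described here. The only point is that the terminal holds no banking logic at all.

```python
class Terminal:
    """A 'dumb' cash terminal: it obeys commands and forwards raw input."""

    def __init__(self, scripted_input):
        # The scripted list stands in for the card reader and keypad.
        self.inputs = iter(scripted_input)
        self.screen = ""
        self.dispensed = 0

    def execute(self, command, argument):
        if command == "DISPLAY":
            self.screen = argument
        elif command == "DISPENSE":
            self.dispensed += argument
        else:
            raise ValueError(f"unknown command: {command}")

    def read_input(self):
        return next(self.inputs)


def host_session(terminal, balances, pins):
    """All decision making lives here, on the host side of the link."""
    terminal.execute("DISPLAY", "INSERT CARD")
    account = terminal.read_input()
    terminal.execute("DISPLAY", "ENTER PIN")
    pin = terminal.read_input()
    if pins.get(account) != pin:
        terminal.execute("DISPLAY", "INCORRECT PIN")
        return
    terminal.execute("DISPLAY", "ENTER AMOUNT")
    amount = int(terminal.read_input())
    if amount <= 0 or amount > balances[account]:
        terminal.execute("DISPLAY", "REQUEST DECLINED")
        return
    balances[account] -= amount  # debit the account before paying out
    terminal.execute("DISPENSE", amount)
    terminal.execute("DISPLAY", "TAKE CASH")


# Made-up account data and one scripted customer visit.
balances = {"1001": 100}
pins = {"1001": "4321"}
atm = Terminal(["1001", "4321", "40"])
host_session(atm, balances, pins)
```

Swap out `host_session` and the same terminal could sell train tickets; that is what being a terminal with a weird physical interface means.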

Most modern ATMs follow this same model, although the actual protocol has become more sophisticated and involves a great deal more XML. You can be reassured that when the ATM takes a frustratingly long time to advance to the next screen, it is at least waiting to receive the contents of that screen from a host computer that is some distance away or, even worse, in The Cloud.

Incidentally, you might wonder about the software that ran on the host computer. I believe that the IBM 2984 was designed for use with CICS, the Customer Information Control System. CICS will one day get its own article, but it launched in 1966, built specifically for Michigan Bell to manage customer and billing data. Over the following years, CICS was extensively expanded for use in the utility and later finance industries. I don't think it's inaccurate to call CICS the first "enterprise customer relationship management system," the first voyage in an adventure that took us through Siebel before grounding on the rocks of Salesforce. Today we wouldn't think of a CRM as the system of record for depository finance institutions like banks, but CICS itself was very finance-oriented from the start (telephone companies sometimes felt like accounting firms that ran phones on the side) and took naturally to gathering transactions and posting them against customer accounts. Since CICS was designed as an online system to serve telephone and in-person customer service reps (in fact making CICS a very notable early real-time computing system), it was also a good fit for handling ATM requests throughout the day.

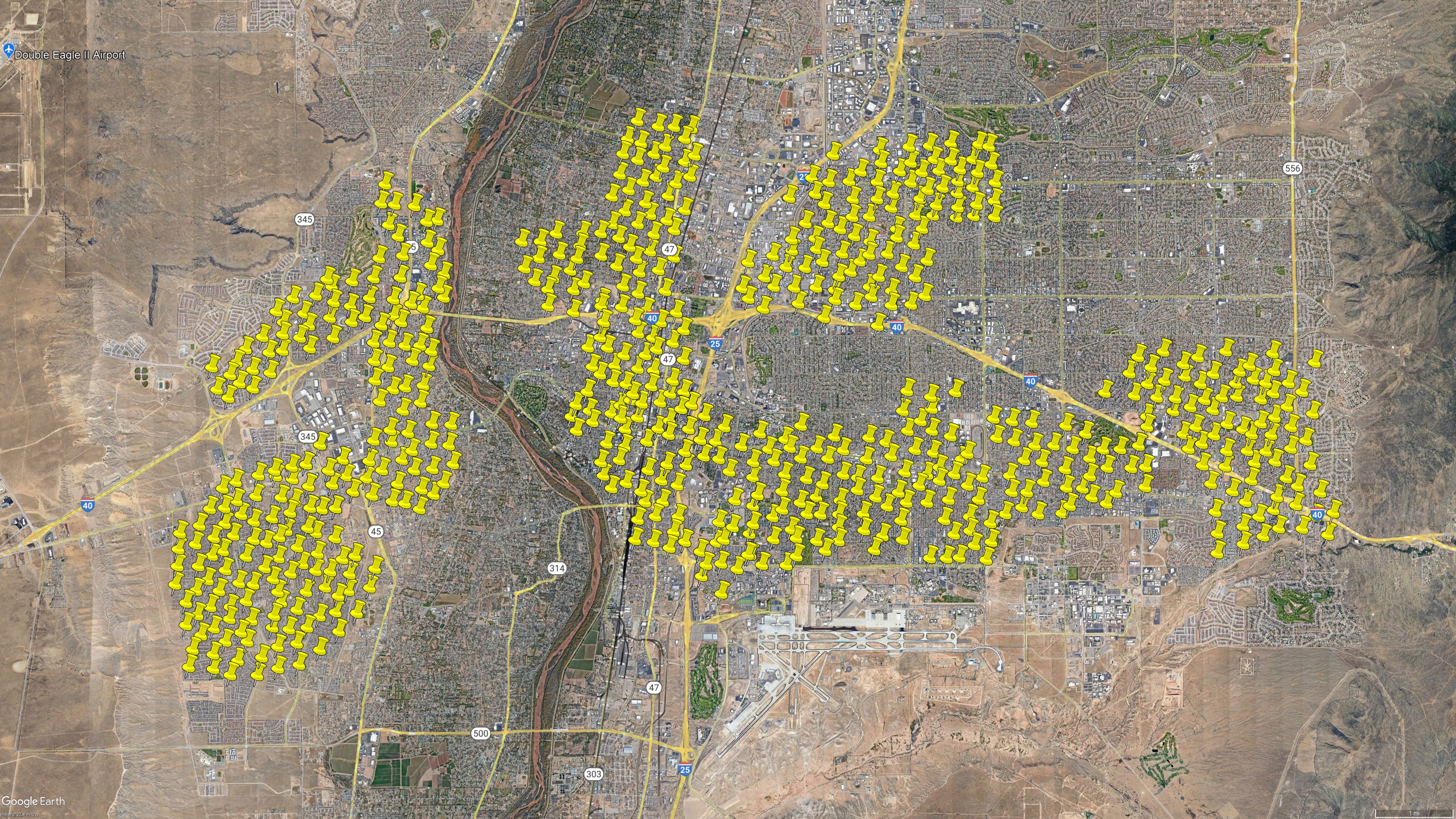


Despite the 2984's lackluster success, IBM moved on. I don't think IBM was particularly surprised by the outcome; the 2984 was always a "request quotation" (i.e. custom) product. IBM probably regarded it as a prototype or pilot with their friendly customer Lloyds Bank. More than actual deployment, the 2984's achievement was paving the way for the IBM 3614 Consumer Transaction Facility.

In 1970, IBM had replaced the System/360 line with the System/370. The 370 is directly based on the 360 and uses the same instruction set, but it came with numerous improvements. Among them was a new approach to peripheral connectivity that developed into the IBM Systems Network Architecture, or SNA, basically IBM's entry into the computer networking wars of the 1970s and 1980s. While SNA would ultimately cede to IP (with, naturally, an interregnum of SNA-over-IP), it gave IBM the foundations for networked systems that are almost modern in their look and feel.

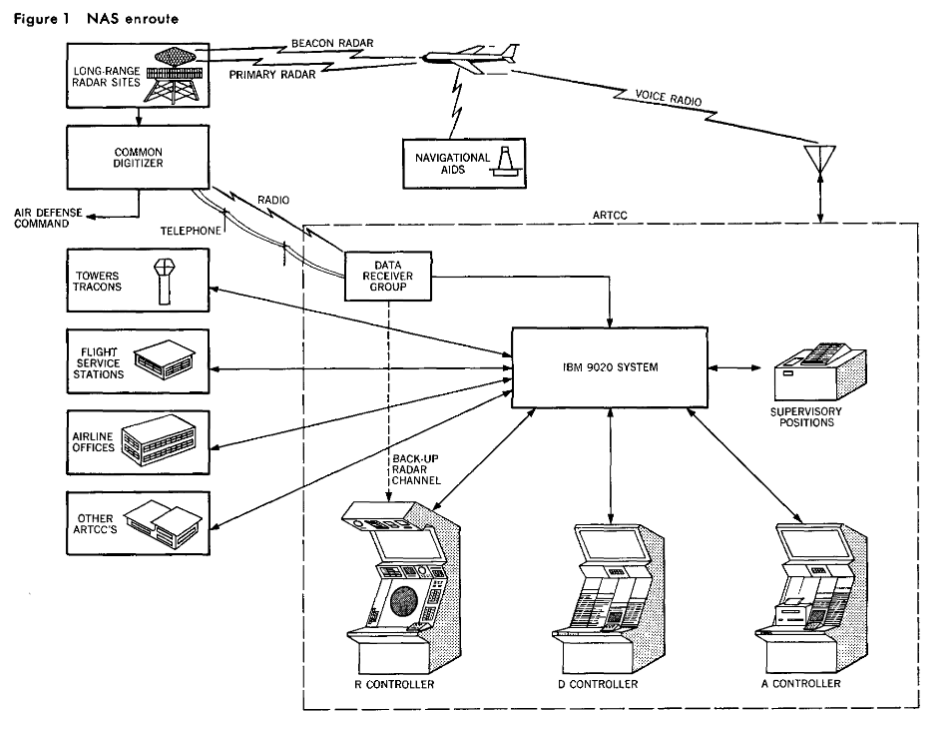

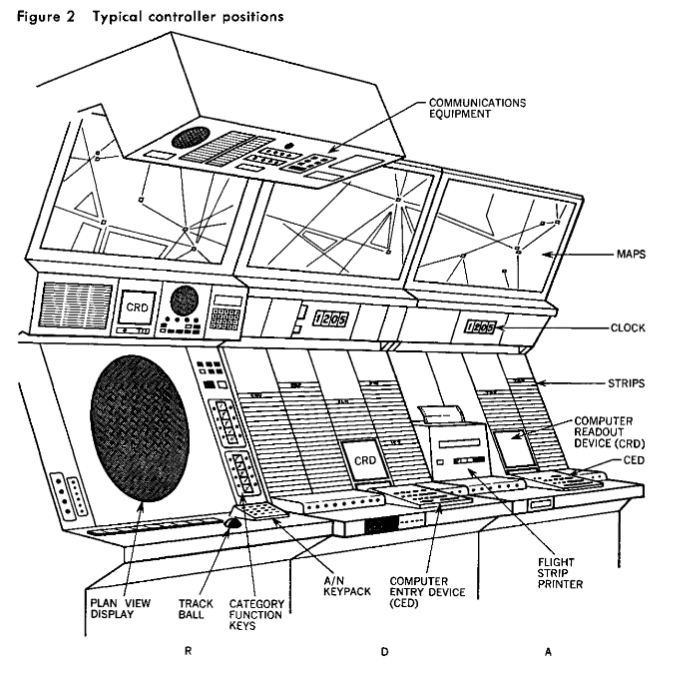

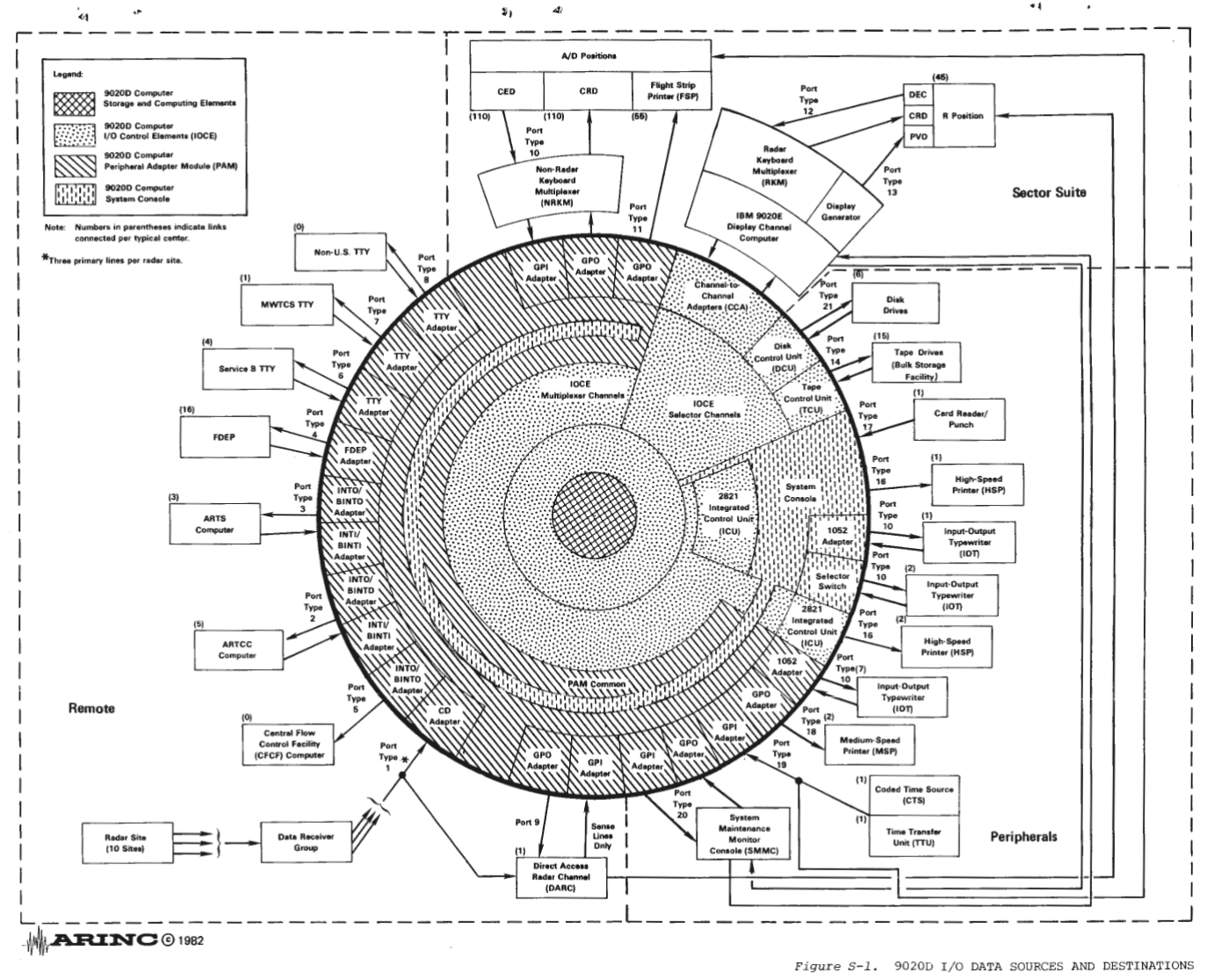

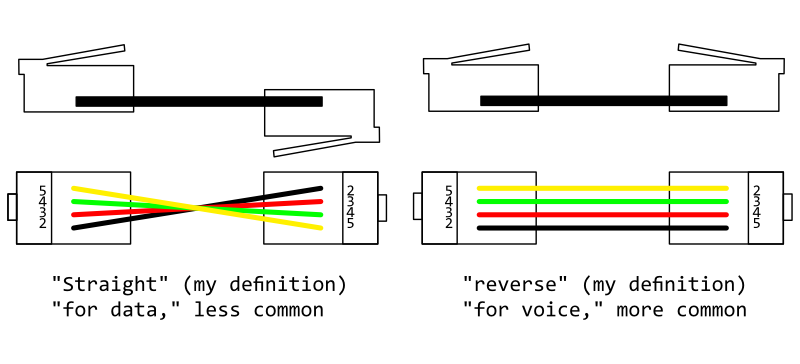

I say almost because SNA was still very much a mainframe-oriented design. An example SNA network might look like this: An S/370 computer running CICS (or one of several other IBM software packages with SNA support) is connected via channel (the high-speed peripheral bus on mainframe computers, analogous to PCI) to an IBM 3705 Communications Controller running the Network Control Program (analogous to a network interface controller). The 3705 had one or more "scanners" installed, which supported simple low-speed serial lines or fast, high-level protocols like SDLC (synchronous data link control) used by SNA. The 3705 fills a role sometimes called a "front-end processor," doing the grunt work of polling (scanning) communications lines and implementing the SDLC protocol so that the "actual computer" was relieved of these menial tasks.

At the other end of one of the SDLC links might be an IBM 3770 Data Communications System, which was superficially a large terminal that, depending on options ordered, could include a teletypewriter, card reader and punch, diskette drives, and a high speed printer. Yes, the 3770 is basically a grown-up remote job entry terminal, and the SNA/SDLC stack was a direct evolution from the Bisync stack used by the 2984. The 3770 had a bit more to offer, though: in order to handle its multiple devices, like the printer and card punch, it acted as a sort of network switch—the host computer identified the 3770's devices as separate endpoints, and the 3770 interleaved their respective traffic. It could also perform that interleaving function for additional peripherals connected to it by serial lines, which depending on customer requirements often included additional card punches and readers for data entry, or line printers for things like warehouse picking slips.
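The interleaving idea can be sketched in a few lines. This is a loose illustration, not the SNA or SDLC frame format: the device names, the `(address, payload)` tuples, and the round-robin policy are all assumptions I have made for the example.

```python
from collections import deque


def interleave(device_queues):
    """Round-robin frames from several devices onto one shared link.

    Each frame is tagged with its device address so the far end can
    separate the streams again.
    """
    queues = {addr: deque(frames) for addr, frames in device_queues.items()}
    link = []
    while any(queues.values()):  # an empty deque is falsy
        for addr, queue in queues.items():
            if queue:
                link.append((addr, queue.popleft()))
    return link


def demultiplex(link):
    """Rebuild each device's stream from the tagged frames on the link."""
    streams = {}
    for addr, payload in link:
        streams.setdefault(addr, []).append(payload)
    return streams


# Hypothetical devices hanging off one controller.
devices = {
    "printer": ["line 1", "line 2", "line 3"],
    "punch": ["card A"],
    "console": ["msg X", "msg Y"],
}
link = interleave(devices)
```

Because every frame carries its address, a slow card punch never blocks the printer: each device only needs its own frames to arrive in order.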

In 1973, IBM gave banks the SNA treatment with the 3600 Finance Communication System. A beautifully orange brochure tells us:

> The IBM 3600 Finance Communication System is a family of products designed to provide the Finance Industry with remote on-line teller station operation.

System/370 computers represented an enormous investment, generally around a million dollars and more often above that point than below. They were also large and required both infrastructure and staff to support them. Banks were already not inclined to install an S/370 in each branch, so it became a common pattern to place a "full-size" computer like an S/370 in a central processing center to support remote peripherals (over leased telephone line) in branches. The 3600 was a turn-key product line for exactly this use.

An S/370 computer with a 3704 or 3705 running the NCP would connect (usually over a leased line) to a 3601 System, which IBM describes as a "programmable communications controller" although they do not seem to have elevated that phrase to a product name. The 3601 is basically a minicomputer of its own, with up to 20KB of user-available memory and a diskette drive. A 3601 includes, as standard, a 9600 bps SDLC modem for connection to the host, and a 9600 bps "loop" interface for a local multidrop serial bus. For larger installations, you could expand a 3601 with additional local loop interfaces or 4800 or 9600 bps modems to extend the local loop interface to a remote location via telephone line.

In total, a 3601 could interface up to five peripheral loops with the host computer over a single interleaved SDLC link. But what would you put on those peripheral loops? Well, the 3604 Keyboard Display Unit was the mainstay, with a vacuum fluorescent display and choice of "numeric" (accounting, similar to a desk calculator) or "data entry" (alphabetic) keyboard. A bank would put one of these 3604s in front of each teller, where they could inquire into customer accounts and enter transactions. In the meantime, 3610 printers provided general-purpose document printing capability, including back-office journals (logging all transactions) or filling in pre-printed forms such as receipts and bank checks. Since the 3610 was often used as a journal printer, it was available with a take-up roller that stored the printed output under a locked cover. In fact, basically every part of the 3600 system was available with a key switch or locking cover, a charming reminder of the state of computer security at the time.

The 3612 is a similar printer, but with the addition of a dedicated passbook feature. Remember passbook savings accounts, where the bank writes every transaction in a little booklet that the customer keeps? They were still around, although declining in use, in the 1970s. The 3612 had a slot on the front where an appropriately formatted passbook could be inserted, and like a check validator or slip printer, it printed the latest transaction onto the next empty line. Finally, the 3618 was a "medium-speed" printer, meaning 155 lines per minute. A branch bank would probably have one, in the back office, used for printing daily closing reports and other longer "administrative" output.

A branch bank could carry out all of its routine business through the 3600 system, including cash withdrawals. In fact, since a customer withdrawing cash would end up talking to a teller who simply keyed the transaction into a 3604, it seems like a little more automation could make an ATM part of the system.

Enter the 3614 Consumer Transaction Facility, the first IBM ATM available as a regular catalog item. The 3614 is actually fairly obscure, and doesn't seem to have sold in large numbers. Some sources suggest that it was basically the same as the 2984, but with a general facelift and adaptations to connect to a 3601 Finance Communication Controller instead of directly to a front-end processor. Some features which were optional on the 2984, like a deposit slot, were apparently standard on the 3614. I'm not even quite sure when the 3614 was introduced, but based on manual copyright dates it must have been around by 1977.

One of the reasons the 3614 is obscure is that its replacement, the IBM 3624 Consumer Transaction Facility, hit the market in 1978—probably very shortly after the 3614. The 3624 was functionally very similar to the 3614, but with maintainability improvements like convenient portable cartridges for storing cash. It also brought a completely redesigned front panel that is more similar to modern ATMs. I should talk about the front panels—the IBM ATMs won a few design awards over their years, and they were really very handsome machines. The backlit logo panel and function-specific keys of the 3624 look more pleasant to use than most modern ATMs, although they would, of course, render translation difficult.

The 3614/3624 series established a number of conventions that are still in use today. For example, they added an envelope deposit system in which the machine accepted an envelope (with cash or checks) and printed a transaction identifier on the outside of the envelope for lookup at the processing center. This relieved the user of writing up a deposit slip when using the ATM. The machines were also capable of not only reading but, optionally, writing to the magnetic stripes on ATM cards. To the modern reader that sounds strange, but we have to discuss one of the most enduring properties of the 3614/3624: their handling of PIN numbers.

I believe the 2984 did something fairly similar, but the details are now obscure (and seem to get mixed up with its use of LUCIFER/DSD-1/DES for communications). The 3614/3624, though, so firmly established a particular approach to PIN numbers that it is now known as the 3624 algorithm. Here's how it works: the ATM reads the card number (called Primary Account Number or PAN) off of the ATM card, reads a key from memory, and then applies a convoluted cryptographic algorithm to calculate an "intermediate PIN" from the two. The "intermediate PIN" is then added, digit by digit and modulo 10, to a "PIN offset" stored on the card itself, producing the PIN that the user is actually expected to enter. This means that your "true" PIN is a static value calculated from your card number and a key, but as a matter of convenience, you can "set" a PIN of your choice by using an ATM that is equipped to rewrite the PIN offset on your card. This same system, with some tweaks and a lot of terminological drift, is still in use today. You will sometimes hear IBM's intermediate PIN called the "natural PIN," the one you get with an offset of 0, which is a use of language that I find charming.
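To make the offset arithmetic concrete, here is a minimal Python sketch of the scheme described above. It is an illustration under loud assumptions, not IBM's implementation: real systems encrypt card data with DES, while this sketch substitutes HMAC-SHA256 from the standard library so that it runs anywhere. The function names, the four-digit default, and the example key are all hypothetical, and the decimalization table shown is the commonly cited default rather than anything a particular bank is known to use.

```python
import hashlib
import hmac

# Commonly cited default table: hex digits 0-9 map to themselves, A-F map to 0-5.
DECIMALIZATION_TABLE = "0123456789012345"


def intermediate_pin(pan: str, key: bytes, length: int = 4) -> str:
    """Derive the 'natural' PIN from the card number and a secret key.

    ASSUMPTION: HMAC-SHA256 stands in for the DES encryption used by real
    systems. Only the overall shape (keyed function, then decimalization,
    then truncation) is meant to be illustrative.
    """
    digest = hmac.new(key, pan.encode("ascii"), hashlib.sha256).hexdigest()
    # Map every hex digit of the keyed output to a decimal digit.
    digits = "".join(DECIMALIZATION_TABLE[int(h, 16)] for h in digest)
    return digits[:length]


def add_digits(a: str, b: str) -> str:
    """Add two digit strings position by position, modulo 10, with no carry."""
    return "".join(str((int(x) + int(y)) % 10) for x, y in zip(a, b))


def sub_digits(a: str, b: str) -> str:
    """Subtract b from a position by position, modulo 10, with no borrow."""
    return "".join(str((int(x) - int(y)) % 10) for x, y in zip(a, b))


def expected_pin(pan: str, key: bytes, offset: str) -> str:
    """The PIN the customer must enter: intermediate PIN plus the card's offset."""
    return add_digits(intermediate_pin(pan, key, len(offset)), offset)


def offset_for_chosen_pin(pan: str, key: bytes, chosen_pin: str) -> str:
    """The offset to write to the card so that chosen_pin becomes the expected PIN."""
    return sub_digits(chosen_pin, intermediate_pin(pan, key, len(chosen_pin)))


if __name__ == "__main__":
    pan = "4000001234567899"      # made-up card number
    key = b"example-bank-key"     # made-up key
    offset = offset_for_chosen_pin(pan, key, "4821")
    # Verifying recomputes the chosen PIN from PAN, key, and stored offset.
    print(expected_pin(pan, key, offset) == "4821")
```

Note that "changing your PIN" never touches the key or the intermediate PIN. Only the offset changes, which is why an ATM that can rewrite the card is all it takes.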

Another interesting feature of the 3624 was a receipt printer—I'm not sure if it was the first ATM to offer a receipt, but it was definitely an early one. The exact mechanics of the 3624 receipt printer are amusing and the result of some happenstance at IBM. Besides its mainframes and their peripherals, IBM in the 1970s was increasingly invested in "midrange computers" or "midcomputers" that would fill in a space between the mainframe and minicomputer—and, most importantly, make IBM more competitive with the smaller businesses that could not afford IBM's mainframe systems and were starting to turn to competitors like DEC as a result. These would eventually blossom into the extremely successful AS/400 and System i, but not easily, and the first few models all suffered from decidedly soft sales.

For these smaller computers, IBM reasoned that they needed to offer peripherals like card punches and readers that were also smaller. Apparently following that line of thought to a misguided extent, IBM also designed a smaller punch card: the 96-column three-row card, which was nearly square. The only computer ever to support these cards was the very first of the midrange line, the 1969 System/3. One wonders if the System/3's limited success led to excess stock of 96-column card equipment, or perhaps they just wanted to reuse tooling. In any case, the oddball System/3 card had a second life as the "Transaction Statement Printer" on the 3614 and 3624. The ATM could print four lines of text, 34 characters each, onto the middle of the card. The machines didn't actually punch them, and the printed text ended up over the original punch fields. You could, if you wanted, actually order a 3624 with two printers: one that presented the slip to the customer, and another that retained it internally for bank auditing. A curious detail that would so soon be replaced by thermal receipt printers.

Unlike IBM's ATMs before it, and, as we will see, unlike those after it as well, the 3624 was a hit. While IBM never enjoyed the dominance in ATMs that they did in computers, and companies like NCR and Diebold had substantial market share, the 3624 was widely installed in the late 1970s and would probably be recognized by anyone who was withdrawing cash in that era. The machine had technical leadership as well: NCR built their successful ATM line in part by duplicating aspects of the 3624 design, allowing interoperability with IBM backend systems. Ultimately, as so often happens, it may have been IBM's success that became its undoing.

In 1983, IBM completely refreshed their branch banking solution with the 4700 Finance Communication System. While architecturally similar, the 4700 was a big upgrade. For one, the CRT had landed: the 4700 peripherals replaced several-line VFDs with full-size CRTs typical of other computer terminals, and conventional computer keyboards to boot. Most radically, though, the 4700 line introduced distributed communications to IBM's banking offerings. The 4701 Communications Controller was optionally available with a hard disk, and could be programmed in COBOL. Disk-equipped 4701s could operate offline, without a connection to the host, or in a hybrid mode in which they performed some transactions locally and only contacted the host system when necessary. Local records kept by the 4701 could be automatically sent to the host computer on a scheduled basis for reconciliation.

Along with the 4700 series came a new ATM: the IBM 473x Personal Banking Machines. And with that, IBM's glory days in ATMs came crashing to the ground. The 473x series was such a flop that it is hard to even figure out the model numbers: the 4732 is most often referenced, but others clearly existed, including the 4730, 4731, 4736, 4737, and 4738. These various models were introduced from 1983 to 1988, making up almost a decade of IBM's efforts and very few sales. The 4732 had a generally upgraded interface, including a CRT, but a similar feature set, which is unsurprising given that the 3624 had already introduced most of the features ATMs have today. It also didn't sell. I haven't been able to find any sales numbers, but the trade press referred to the 4732 with terms like "debacle," so they couldn't have been great.

There were a few faults in the 4732's stars. First, IBM had made the decision to handle the 4700 Finance Communication System as a complete rework of the 3600. The 4700 controllers could support some 3600 peripherals, but 4700 peripherals could not be used with 3600 controllers. Since 3600 systems were widely installed in banks, the compatibility choice created a situation where many of the 4732's prospective buyers would end up having to replace a significant amount of their other equipment, and then likely make software changes, in order to support the new machine. That might not have been so bad on its own had IBM's competitors not provided another way out.

NCR made their fame in ATMs in part by equipping their contemporary models with 3624 software emulation, making them a drop-in modernization option for existing 3600 systems. Other ATM manufacturers had pursued a path of interoperability, with multiprotocol ATMs that supported multiple hosts, and standalone ATM host products that could interoperate with multiple backend accounting systems. For customers, buying an NCR or Diebold product that would work with whatever they already used was a more appealing option than buying the entire IBM suite in one go. It also matched the development cycle of ATMs better: as a consumer-facing device, ATMs became part of the brand image of the bank, and were likely to see replacement more often than back-office devices like teller terminals. NCR offered something like a regular refresh, while IBM was still in a mode of generational releases that would completely replace the bank's computer systems.

The 4732 and its 473x compatriots became the last real IBM ATMs. After a hiatus of roughly a decade, IBM reentered the ATM market by forming a joint venture with Diebold called InterBold. The basic terms were that Diebold would sell its ATMs in the US, and IBM would sell them overseas, where IBM had generally been the more successful of the two brands. The IBM 478x series ATMs, which you might encounter in the UK for example, are the same as the Diebold 1000 series in the US. InterBold was quite successful, becoming the dominant ATM manufacturer in the US, and in 1998 Diebold bought out IBM's share.

IBM had won the ATM market, and then lost it. Along the way, they left us with so much texture: DES's origins in the ATM, the 3624 PIN format, the dumb terminal or thin client model... even InterBold, IBM's protracted exit, gave us quite a legacy: now you know the reason that so many later ATMs ran OS/2. IBM, a once great company, provided Diebold with their once great operating system. Unlike IBM, Diebold made it successful.

-

Wikipedia calls it DTD-1 for some reason, but IBM sources consistently say DSD-1. I'm not sure if the name changed, if DSD-1 and DTD-1 were slightly different things, or if Wikipedia is simply wrong. One of the little mysteries of the universe.

-

I probably need to explain that I am pointedly not explaining IBM model numbers, which do follow various schemes but are nonetheless confusing. Bigger numbers are sometimes later products but not always; some prefixes mean specific things, other prefixes just identify product lines.